Concepts¶

FeatureByte Catalog¶

A FeatureByte Catalog operates as a centralized repository for organizing tables, entities, features, and feature lists and other objects to facilitate feature reuse and serving.

By employing a catalog, team members can effortlessly share, search, retrieve, and reuse these assets while obtaining comprehensive information about their properties.

Create multiple catalogs for data warehouses covering multiple domains to maintain clarity and easy access to domain-specific metadata.

SDK Reference

Refer to the Catalog object main page or to the specific links:

- list catalogs,

- create a catalog,

- get the currently active catalog,

- activate a catalog,

- list tables, entities, features or feature lists in a catalog,

- and retrieve a table, an entity, a feature or a feature list from a catalog.

User Interface

Learn by example with our 'Create Catalog' UI tutorials.

Source Table and Special Columns¶

Data Source¶

A Data Source object in FeatureByte represents a collection of source tables that the feature store can access. From a data source, you can:

- Retrieve the list of databases available

- Obtain the list of schemas within the desired database

- Access the list of source tables contained in the selected schema

- Retrieve a source table for exploration or registering it in the catalog.

SDK Reference

Refer to the DataSource object main page or to the specific links:

User Interface

Learn by example with our 'Register Tables' UI tutorials.

Source Table¶

A Source Table in FeatureByte is a table of interest that the feature store can access and is located within the data warehouse.

To register a Source Table in a FeatureByte catalog, first determine its type. There are four supported types: event table, item table, dimension table and slowly changing dimension table.

Note

Registering a table according to its type determines the types of feature engineering operations that are possible on the table's views and enforces guardrails accordingly.

To identify the table type and collect key metadata, Exploratory Data Analysis (EDA) can be performed on the source table. You can obtain descriptive statistics, preview a selection of rows, or collect additional information on their columns.

SDK Reference

Refer to the SourceTable object main page or to the specific links:

- list source tables in a data source,

- retrieve a source table from a data source,

- obtain descriptive statistics,

- and preview a selection of rows,

User Interface

Learn by example with our 'Register Tables' UI tutorials.

Primary key¶

A Primary Key is a column or set of columns uniquely identifying each record (row) in a table.

The primary key is used to enforce the integrity of the data and ensure no duplicate records in the table. The primary key must satisfy the following requirements:

- Unique: Each record in the table must have a unique primary key value.

- Non-null: The primary key cannot be null (empty) for any record.

- Stable: The primary key value should not change over time.

Four types of primary keys can be found in FeatureByte tables:

- Event ID: The primary key in Event table.

- Item ID: The primary key in Item table.

- Dimension ID: The primary key in Dimension table.

- Surrogate key: The primary key in Slowly Changing Dimension (SCD) table.

Event ID¶

An event ID serves as the primary key of the Event table. An event ID in such a context entails:

-

Uniqueness: The event ID is unique for each row, ensuring that each business event recorded in the table can be distinctly identified. No two rows in the table will have the same event ID.

-

Representation of Business Events: Each row in the event table represents a business event. A business event could be anything significant to the business that needs to be recorded, like a transaction, a customer interaction, a system failure, etc.

-

Time Association: Along with the event ID, the table will typically include a timestamp, the event timestamp, indicating when the event occurred.

Item ID¶

An item ID, serving as the primary key in an Item table, plays a crucial role in organizing and relating detailed information about specific business events. An item ID in such a context entails:

-

Uniqueness: The item ID is unique for each row, ensuring that each item can be distinctly identified and accessed.

-

Detailed Event Information: While the event table records each occurrence of a business event with a timestamp, the item table delves into the specifics of these events. For instance, in a retail context, if the Event Table records a sale, the Item Table would list the individual products (items) that were part of that sale.

-

Implicit Time Link: Although the item table itself might not include a timestamp, its linkage to the event table, which does have a timestamp, implicitly associates each item with the time of the event. For example, a product item's details in the item table are connected to the timestamp of the sale event in the event Table.

-

One-to-Many Relationship with event ID: The item ID typically has a one-to-many relationship with the event ID. This means that one event ID (like a customer order) can correspond to multiple item IDs (various products in that order).

Example

Depending on the business context, the Item Table could include:

-

For product items in customer orders: Product ID, name, quantity, price, category, and other relevant details.

-

For drug prescriptions in doctor visits: Drug ID, name, dosage, frequency, prescribing doctor, and other pertinent information.

Dimension ID¶

A Dimension ID serves as the primary key in a Dimension table. This means it uniquely identifies each record or row in the table. Unlike event tables that typically store quantitative data (like sales figures, quantities), dimension tables store statitc qualitative information. Dimension IDs should be unique and stable over time. This ensures that historical data remains consistent and reliable.

Example

A product dimension table would store details about products, and each product would have a unique Dimension ID.

Surrogate key¶

In a Slowly Changing Dimension (SCD) table, a surrogate key is a unique identifier assigned to each record. It is used to provide a stable identifier even as the table changes over time.

Example

Consider a table that keeps track of customer addresses over time, known as a Slowly Changing Dimension (SCD) table. When a customer updates their address, a new record with the updated address is added rather than modifying the existing record. To uniquely identify each record, a surrogate key is used as the primary key. Additionally, an effective timestamp is included to indicate when the address change occurred.

In this table, the Customer ID acts as the natural key, connecting records to a specific customer. The Customer ID alone does not guarantee uniqueness, as customers may have multiple addresses throughout time. But, each Customer ID is linked to only one address for a specific time period, enabling the table to preserve historical data.

Natural key¶

In a Slowly Changing Dimension (SCD) table, a natural key (also called alternate key) is a column that remains constant over time and uniquely identifies each active row in the table at any point-in-time.

This key is crucial in maintaining and analyzing the historical changes made in the table.

Example

Consider a SCD table providing changing information on customers, such as their addresses. The customer ID column of this table can be considered a natural key since:

- it remains constant

- uniquely identifies each customer

A given customer ID is associated with at most one address at a particular point-in-time, while over time, multiple addresses can be associated with a given customer ID.

Foreign key¶

A Foreign Key is a column or a group of columns in one table that refers to the primary key in another table. It establishes a relationship between two tables.

Example

An example of foreign key is Customer ID in an Orders table, which links it to the Customer table where Customer ID is the natural key.

Special Timestamp columns¶

Event Timestamp¶

The event timestamp column in an Event table records the exact time at which a specific event occurred.

Effective Timestamp¶

The Effective Timestamp column in a Slowly Changing Dimension (SCD) table specifies the time when the record becomes active or effective.

Example

If a customer changes their address, the effective timestamp would be the date when the new address becomes active.

Expiration Timestamp¶

The Expiration (or end) Timestamp column in a Slowly Changing Dimension (SCD) table specifies the time when the record is no longer valid or active.

Example

If a customer changes their address, the expiration timestamp would be when the old address is no longer valid.

Note

While this column is useful for data management, it cannot be used for feature engineering as it is related to information unknown during the inference time and may cause time leakage. For this reason, the column is discarded by default when views are generated from tables.

Record Creation Timestamp¶

A Record Creation Timestamp refers to the time when a particular record was created in the data warehouse. The record creation timestamp is usually automatically generated by the system when the record is first created, but a user or an administrator can manually set it.

Note

While this column is useful for data management, it is usually not used for feature engineering as it is sensitive to changes in data management that are usually unrelated to the target to predict. This also may cause feature drift and undesirable impact on predictions. For this reason, the column is discarded by default when views are generated from tables.

The information is, however, used to analyze the data availability and freshness of the tables to help with the configuration of their default feature job setting.

Time Zone Offset¶

A time zone offset, also known as a UTC offset, is a difference in time between Coordinated Universal Time (UTC) and a local time zone. The offset is usually expressed as a positive or negative number of hours and minutes relative to UTC.

Example

If the local time is 3 hours ahead of UTC, the time zone offset would be represented as "+03:00". Similarly, if the local time is 2 hours behind UTC, the time zone offset would be represented as "-02:00".

Note

When you register an Event table, you can specify a separate column that provides the time zone offset information. By doing so, all date parts transforms in the event timestamp column will be based on the local time instead of UTC.

The required format for the column is "(+|-)HH:mm".

Timestamp with Time Zone Offset¶

The Snowflake data warehouse supports a timestamp type with time zone offset information (TIMESTAMP_TZ). FeatureByte recognises this timestamp type and date parts for columns or features using timestamp with time zone offset are based on the local time instead of UTC.

Important

Timestamp columns that are stored without time zone offset information are assumed to be UTC timestamps.

Active Flag¶

The Active Flag (also known as Current Flag) column in a Slowly Changing Dimension (SCD) table is used to identify the current version of the record.

Example

If a customer changes their address, the active flag would be set to 'Y' for the new address and 'N' for the old address.

Note

While this column is useful for data management, it cannot be used for feature engineering as the value changes overtime and may differ between training and inference time. It may cause time leakage. For this reason, the column is discarded by default when views are generated from tables.

FeatureByte Tables¶

Table¶

A Table in FeatureByte represents a source table and provides a centralized location for metadata for that table. This metadata determines the type of operations that can be applied to the table's views.

Important

A source table can only be associated with one active table in the catalog at a time. This means that the active table in the catalog is the source of truth for the metadata of the source table. If a table in the catalog becomes deprecated, it can be replaced with a new table in the catalog that has updated metadata.

Table Registration¶

To register a table in a catalog, determine its type first. The table’s type will determine the types of feature engineering operations possible on the table's views and enforces guardrails accordingly. Currently, FeatureByte recognizes four table types:

- Event Table: a table where each row indicates a unique business event occurring at a particular time.

- Item Table: a table containing detailed information about a specific business event.

- Slowly Changing Dimension Table (SCD): a table containing data that changes slowly and unpredictably over time.

- Dimension Table: a table containing static descriptive data.

Two additional table types, Regular Time Series and Sensor data, will be supported shortly.

Optionally, you can include additional metadata at the column level after creating a table to support feature engineering further. This could involve tagging columns with related entity references, updating column description, tagging semantics or defining default cleaning operations.

SDK Reference

Refer to the Table object main page or to the specific links:

User Interface

Learn by example with our 'Register Tables' UI tutorials.

Event Table¶

An Event Table represents a table in the data warehouse where each row indicates a unique business event occurring at a particular time.

Examples

Event tables can take various forms, such as

- An Order table in E-commerce

- A Credit Card Transactions table in Banking

- Doctor Visits in Healthcare

- Clickstream on the Internet.

To create an Event Table in FeatureByte, it is necessary to identify two important columns in your data: the event ID and the event timestamp. The event ID is a unique identifier for each event, while the timestamp indicates when the event occurred.

Note

If your data warehouse is a Snowflake data warehouse, FeatureByte accepts timestamp columns that include time zone offset information.

For timestamp columns without time zone offset information or for non-Snowflake data warehouses, you can specify a separate column that provides the time zone offset information. By doing so, all date parts transforms in the event timestamp column will be based on the local time instead of UTC.

Additionally, the column that represents the record creation timestamp may be identified to enable an automatic analysis of data availability and freshness of the source table. This analysis can assist in selecting the default feature job setting that defines the scheduling of the computation of features associated with the Event table.

SDK Reference

Refer to the Table object main page or to the specific links:

User Interface

Learn by example with our 'Register Tables' UI tutorials.

Item Table¶

An Item Table represents a table in the data warehouse containing detailed information about a specific business event.

Examples

An Item table may contain information about:

- Product Items purchased in Customer Orders

- or Drug Prescriptions issued during Doctor Visits by Patients.

Typically, an Item table has a 'one-to-many' relationship with an Event table. Despite not explicitly including a timestamp, it is inherently linked to an event timestamp through its association with the Event table.

To create an Item Table, it is necessary to identify the columns that represent the item ID and the event ID and determine which Event table is associated with the Item table.

SDK Reference

How to register an item table.

User Interface

Learn by example with our 'Register Tables' UI tutorials.

Slowly Changing Dimension (SCD) Table¶

An SCD Table represents a table in a data warehouse that contains data that changes slowly and unpredictably over time.

There are two main types of SCD Tables:

- Type 1: Overwrites old data with new data

- Type 2: Maintains a history of changes by creating a new record for each change.

FeatureByte only supports using Type 2 SCD Tables since Type 1 SCD Tables may cause data leaks during model training and poor performance during inference.

A Type 2 SCD Table utilizes a natural key to distinguish each active row and facilitate tracking of changes over time. The SCD table employs effective and end (or expiration) timestamp columns to determine the active status of a row. In certain instances, an active flag column may replace the expiration timestamp column to indicate whether a row is active.

Example

Here is an example of a Type 2 SCD table for tracking changes to customer information:

| Customer ID | First Name | Last Name | Address | City | State | Zip Code | Valid From | Valid To |

|---|---|---|---|---|---|---|---|---|

| 123456 | John | Smith | 123 Main St | San Francisco | CA | 12345 | 13/01/2019 11:00:00 | 16/03/2021 10:00:00 |

| 123456 | John | Smith | 456 Oak St | Oakland | CA | 67890 | 16/03/2021 10:00:00 | NULL |

| 789012 | Jane | Doe | 789 Maple Ave | New York City | NY | 34567 | 15/09/2020 10:00:00 | NULL |

In this example, each row represents a specific version of customer information. The customer entity is identified by the natural key "Customer ID". If a customer's information changes, a new row is added to the table with the updated information, along with an effective timestamp ("Valid From" column) and end timestamp ("Valid To" column) to indicate the period during which that version of the information was active. The end timestamp is NULL for the current version of the information, indicating that it is still active.

For example, the customer with ID 123456 initially had an address of 123 Main St in San Francisco, CA, but then changed his address to 456 Oak St in Oakland, CA on 16/03/2021. This change is reflected in the SCD table by adding a new row with the updated address and Valid From of 16/03/2021 10:00:00, and a Valid To with the same timestamp for the previous version of the address.

To create an SCD Table in FeatureByte, it is necessary to identify columns for the natural key, effective timestamp, optionally surrogate key, end (or expiration) timestamp, and active flag.

SDK Reference

How to register a SCD table.

User Interface

Learn by example with our 'Register Tables' UI tutorials.

Dimension Table¶

A Dimension Table represents a table in the data warehouse containing static descriptive data.

Important

Using a Dimension table requires special attention. If the data in the table changes slowly, it is not advisable to use it because these changes can cause significant data leaks during model training and adversely affect the inference performance. In such cases, it is recommended to use a Type 2 Slowly Changing Dimension table that maintains a history of changes.

To create a Dimension Table in FeatureByte, it is necessary to identify which column represents its primary key, also referred in FeatureByte as the dimension ID.

SDK Reference

How to register a dimension table.

User Interface

Learn by example with our 'Register Tables' UI tutorials.

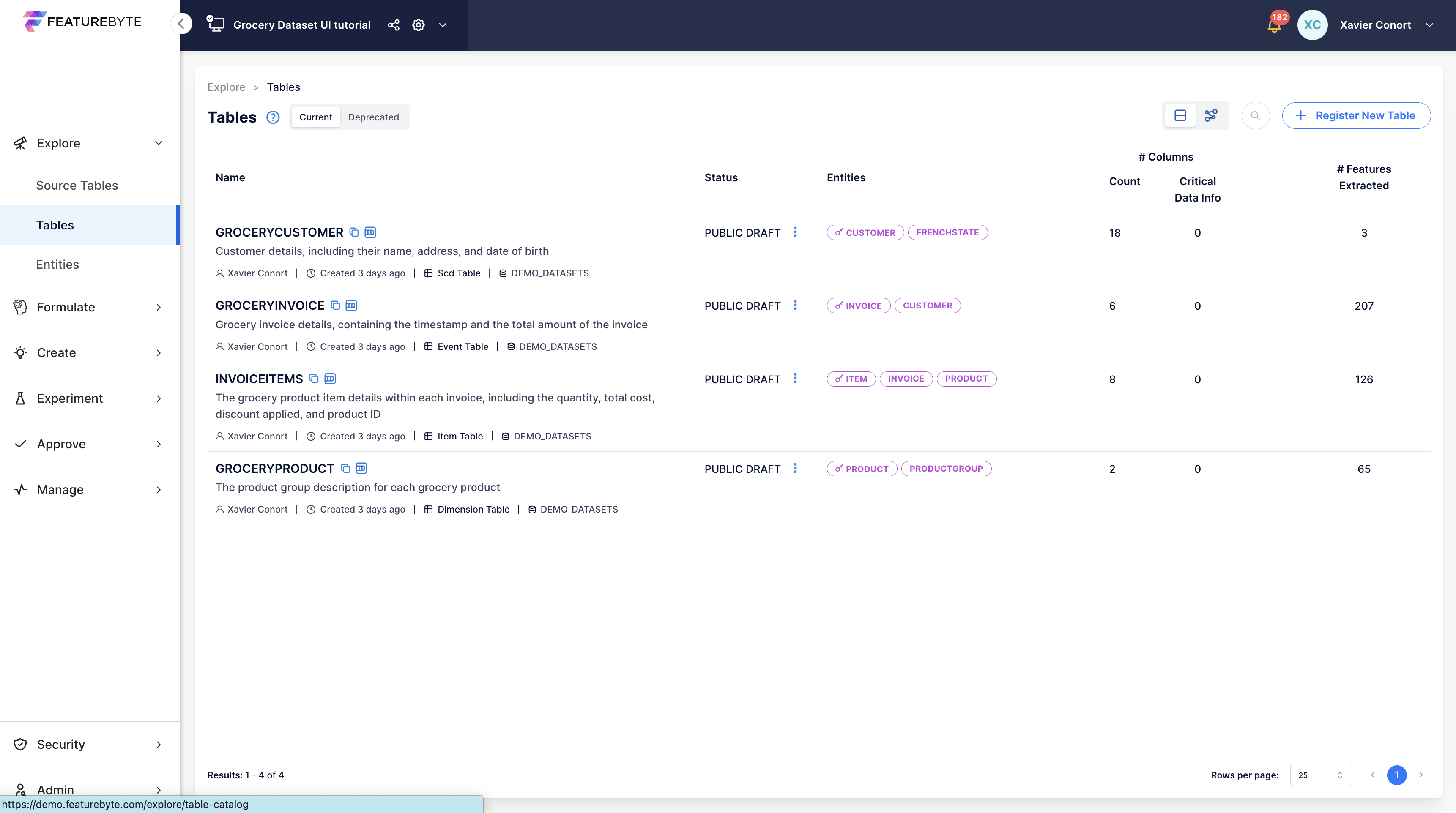

Table Status¶

When a table is registered in a catalog, its status is set to 'PUBLIC_DRAFT' by default. Once the table is prepared for feature engineering, you can modify its status to 'PUBLISHED'. If a table needs to be deprecated, you can update its status to 'DEPRECATED'.

User Interface

Learn by example with our 'Manage feature life cycle' UI tutorials.

Table Columns Metadata¶

Table Column¶

A Table Column refers to a specific column within a table. You can add metadata to the column to help with feature engineering, such as tagging the column with entity references, updating column description, tagging semantics or defining default cleaning operations.

SDK Reference

Refer to the TableColumn object main page or to the specific links:

- update_description,

- tag an entity to a column,

- obtain descrpitive statistics for a column,

- and specify default cleaning operations.

User Interface

Learn by example with our 'Add descriptions and Tag Semantics' and Set Default Cleaning Operations UI tutorials.

Entity Tagging¶

The Entity Tagging process involves identifying the specific columns in tables that identify or reference a particular entity.

These columns are typically primary keys, natural keys, or foreign keys of the table, but not necessarily.

Example

Consider a database for a company that consists of 2 SCD tables: one table for employees and one table for departments. In this database,

- the natural key of the employees table identifies the Employee entity.

- the natural key of the department tables identifies the Department entity.

- the employees table may also have a foreign key column referencing the Department entity.

SDK Reference

User Interface

Learn by example with our 'Register Entities' UI tutorials.

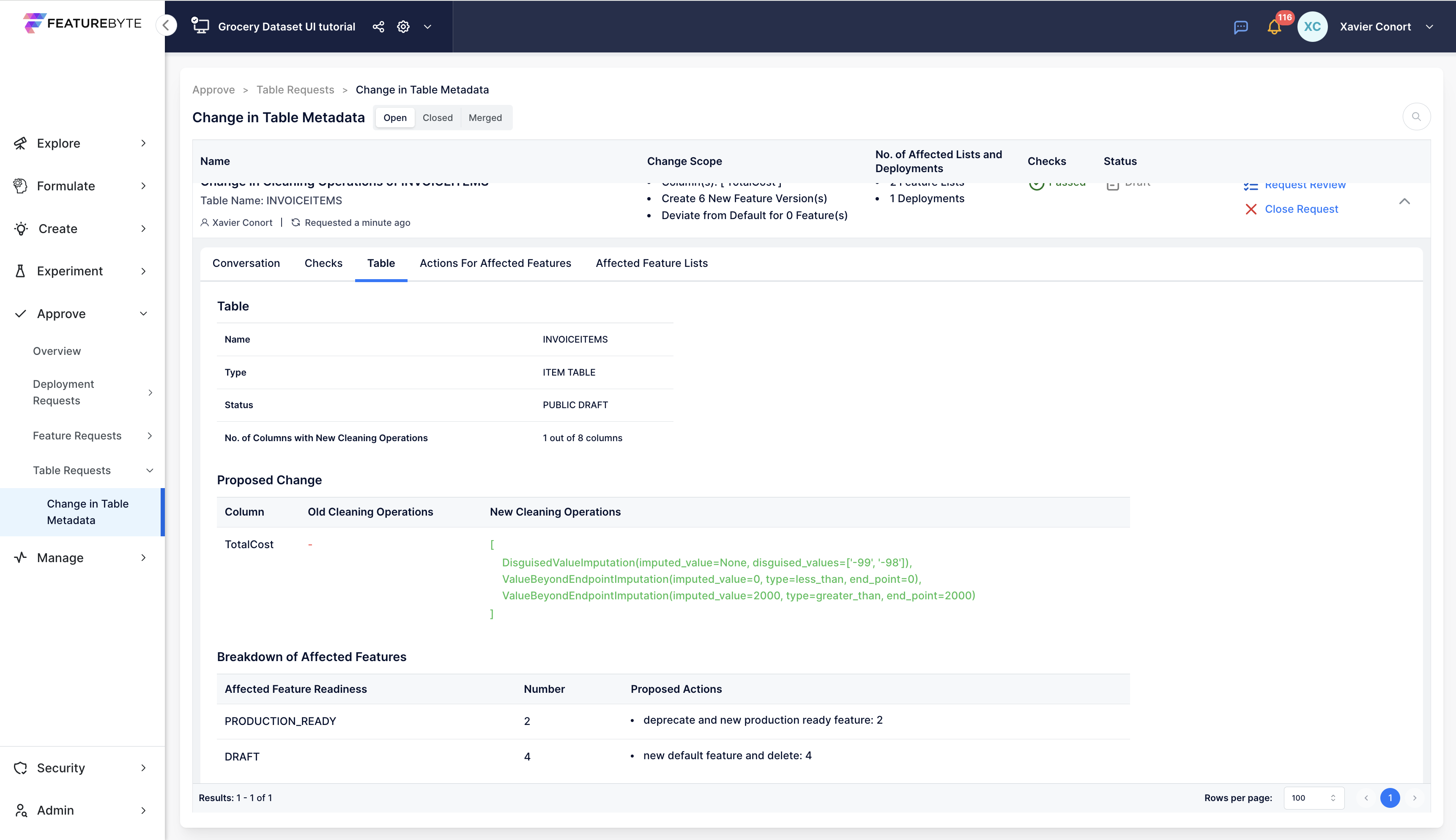

Cleaning Operations¶

Cleaning Operations determine the procedure for cleaning data in a table column before performing feature engineering. The cleaning operations can either be set as a default operation in the metadata of a table column or established when creating a view in a manual mode.

These operations specify how to manage the following scenarios:

- Missing values

- Disguised values

- Values that are not in an anticipated list

- Numeric values and dates that are out of boundaries

- String values when numeric values are expected

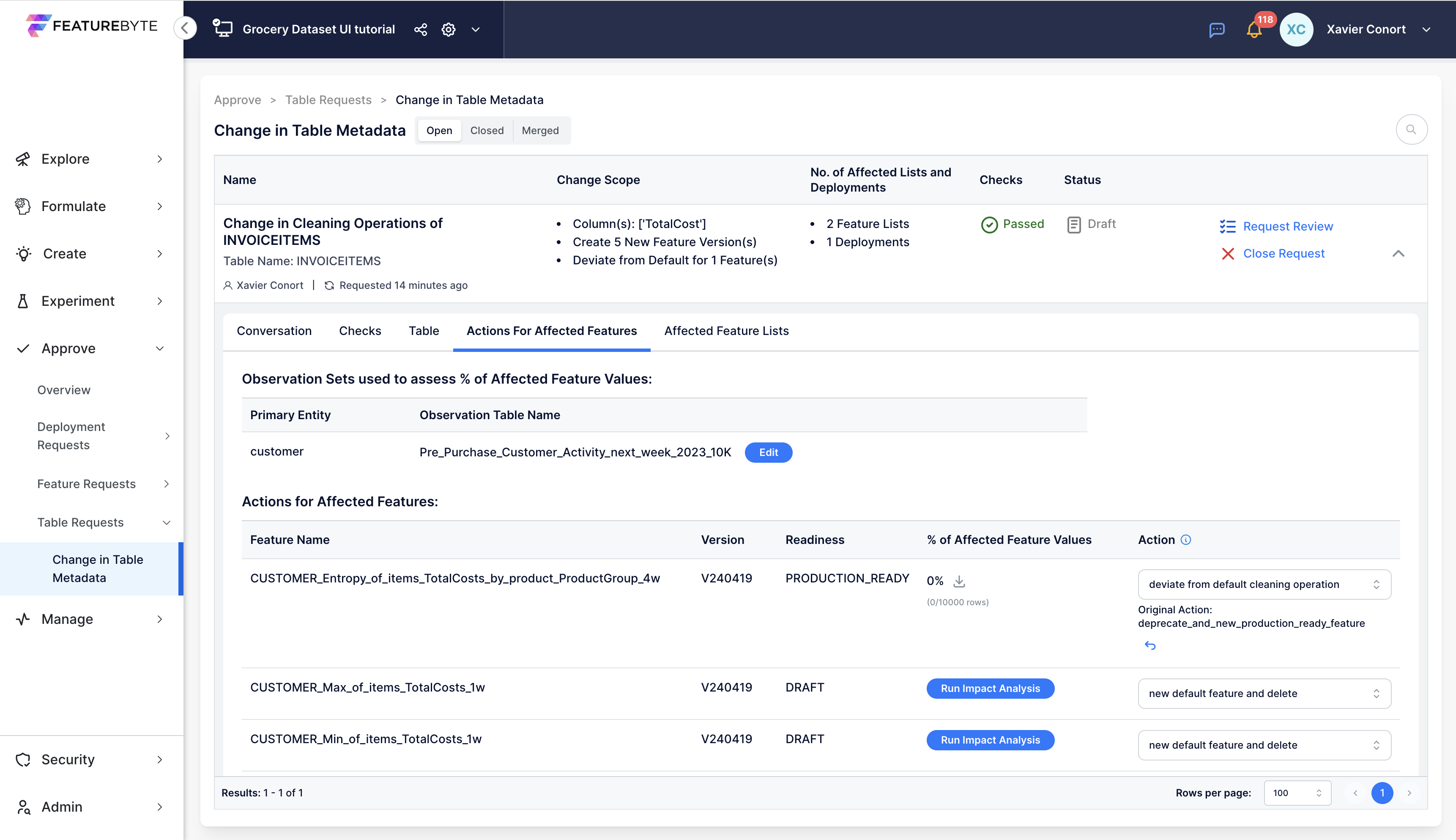

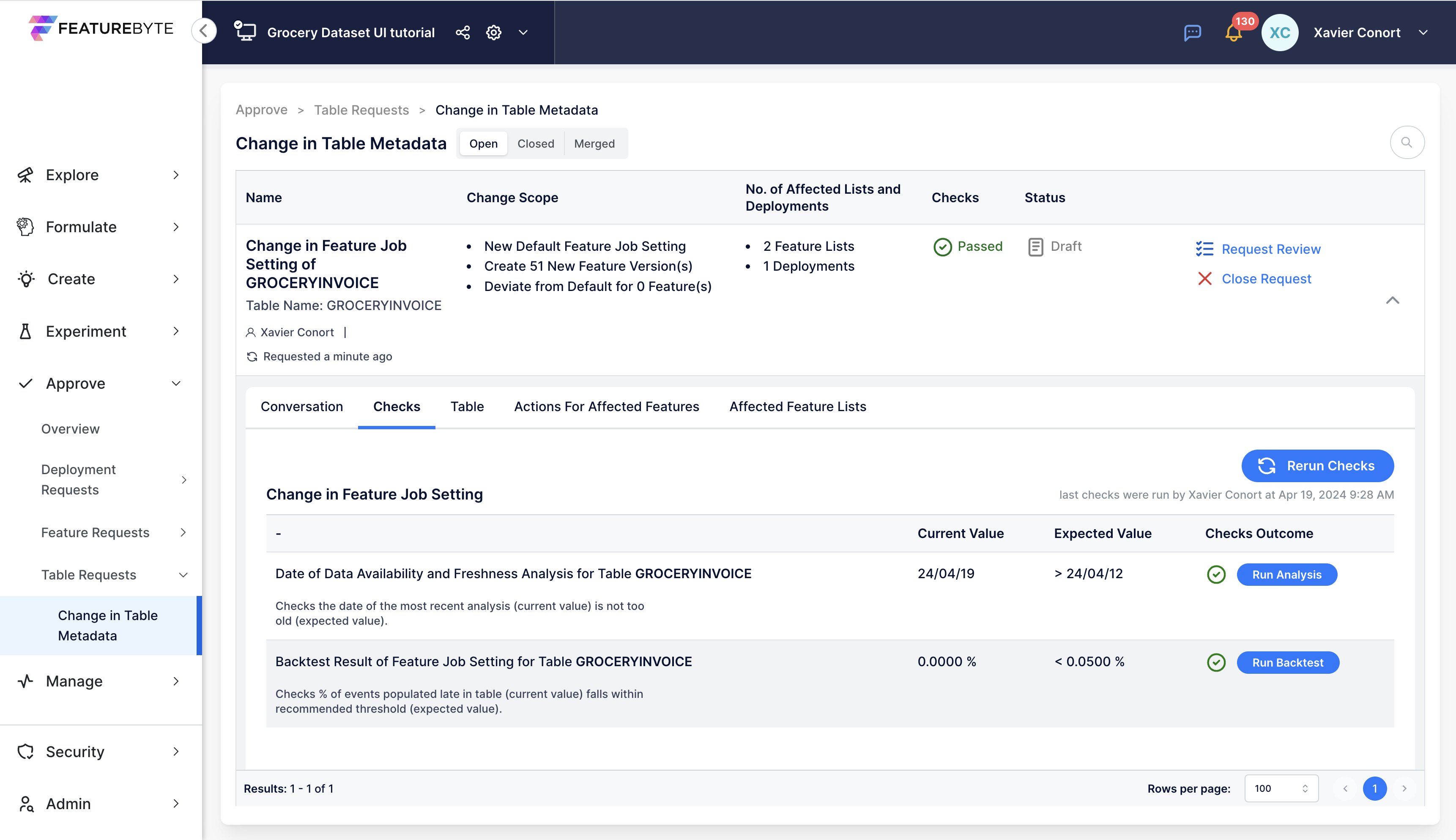

If changes occur in the data quality of the source table, new versions of the feature can be created with new cleaning operations that address the new quality issues.

Important Note for FeatureByte Enterprise Users

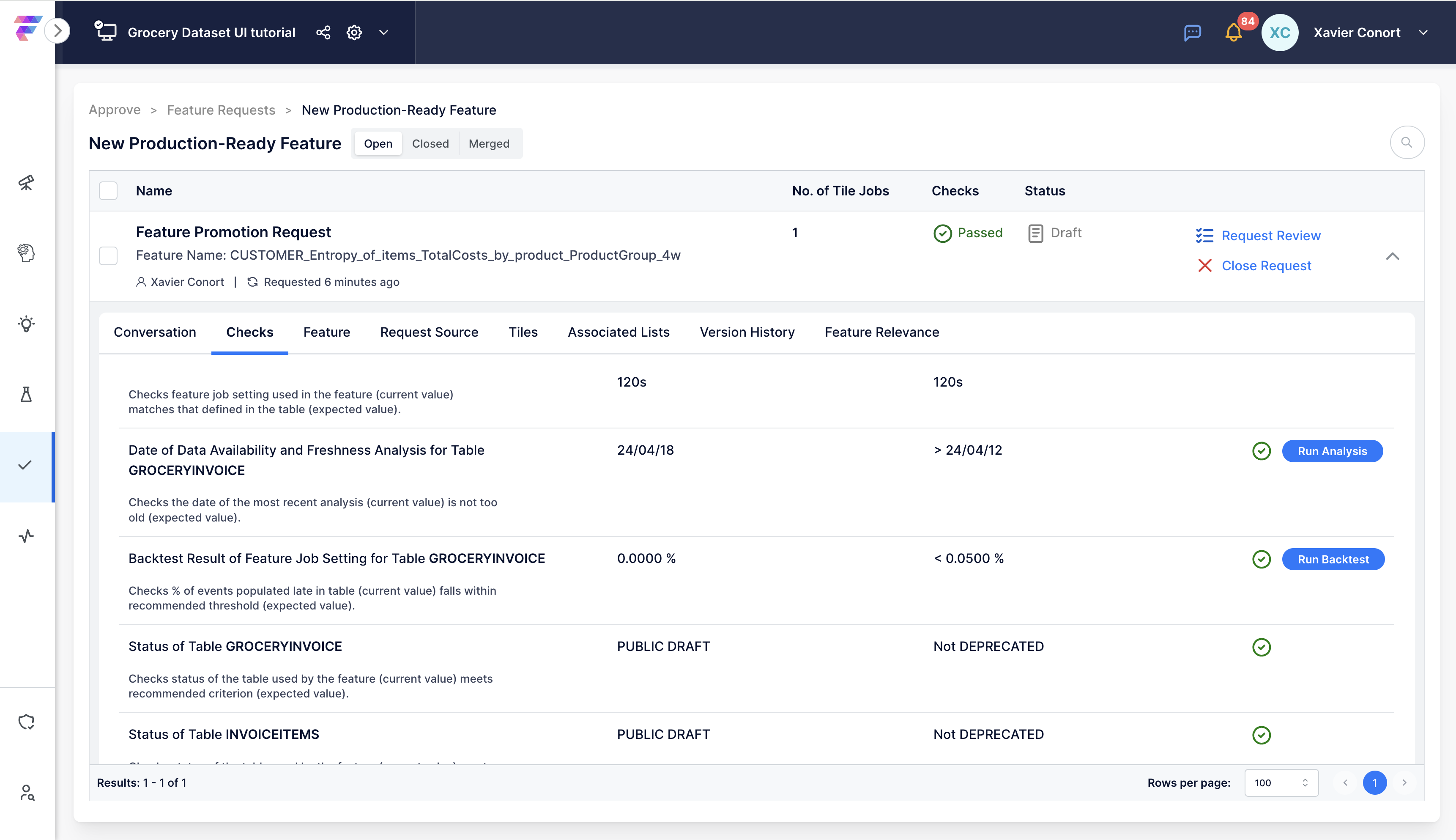

In Catalogs with Approval Flow enabled, changes in table metadata initiate a review process. This process recommends new versions of features and lists linked to these tables, ensuring that new models and deployments use versions that address any data issues.

SDK Reference

How to:

- set default cleaning operations for a column,

- create a view in a manual mode,

- create a new feature version with new cleaning operations.

User Interface

Learn by example with our 'Set Default Cleaning Operations' and 'Manage feature life cycle' UI tutorials.

Column Semantics¶

Recognizing the semantics of data fields and tables is essential for effective and reliable feature engineering. Without this understanding, there's a risk of creating irrelevant or misleading features, and missing out on key insights. Here are some examples of common errors due to misunderstanding data semantics:

- Incorrectly applying 'sum' to intensity measurements, like patient temperatures in a doctor's visit table.

- Misinterpreting a weekday column as numerical and using inappropriate operations like sum, average, or max, instead of more suitable ones like count per weekday, most frequent weekday, weekdays entropy, or unique count.

To guide users in choosing the right feature engineering techniques, FeatureByte introduces a semantic layer for each registered table. This layer encodes the semantics of data fields using a specially designed data ontology, tailored for feature engineering.

FeatureByte Copilot assists in this process for enterprise users. It uses Generative AI to analyze metadata from tables and columns and proposes semantic tags for each column. This semantic tagging is then used by FeatureByte Copilot to suggest relevant data aggregations, filters, and feature combinations during feature ideation.

User Interface

Learn by example with our 'Add descriptions and Tag Semantics' UI tutorials.

Key Numeric Aggregation Column¶

A 'Key Numeric Aggregation Column' is a crucial numeric column within a table that is invaluable for constructing aggregated features. This column usually comprises additive values like counts, sums, or durations, which are ideal for summarization tasks. It acts as a key component for aggregating metrics across different dimensions: specifically, it allows for the computation of sums across grouped categories defined by categorical columns. This aggregation is vital for deciphering patterns and trends within data subgroups. The features generated from such aggregations can be directly applied or further processed for in-depth analyses, such as evaluating diversity, assessing stability, or identifying key categories. Additionally, the 'Key Numeric Aggregation Column' enriches analyses that rely on counts by offering deeper insights into the distribution across these categories.

FeatureByte Copilot assists in the identification of these columns for enterprise users.

Examples:

Total Transaction Amount by Transaction Description

Suppose we have a dataset containing credit card transactions with columns like CardID, TransactionDescription, and Amount. By using the "Amount" column as the Aggregation Metric, we can create a feature that aggregates the total transaction amount for each distinct transaction description, per card.

| CardID | Feature |

|---|---|

| Card1 | {'Retail Purchase': 500, 'Restaurant': 300, 'Online Shopping': 700} |

| Card2 | {'Retail Purchase': 400, 'Online Shopping': 600} |

Total Count by Transaction Description

Alternatively, using counts as the Aggregation Metric can capture the frequency of transactions for each distinct transaction description, per card.

| CardID | Feature |

|---|---|

| Card1 | {'Retail Purchase': 3, 'Restaurant': 2, 'Online Shopping': 2} |

| Card2 | {'Retail Purchase': 1, 'Online Shopping': 3} |

Table Catalog¶

The Tables registered in the catalog can be listed and retrieved by name for easy access and management.

User Inferface

Entities and Relationships¶

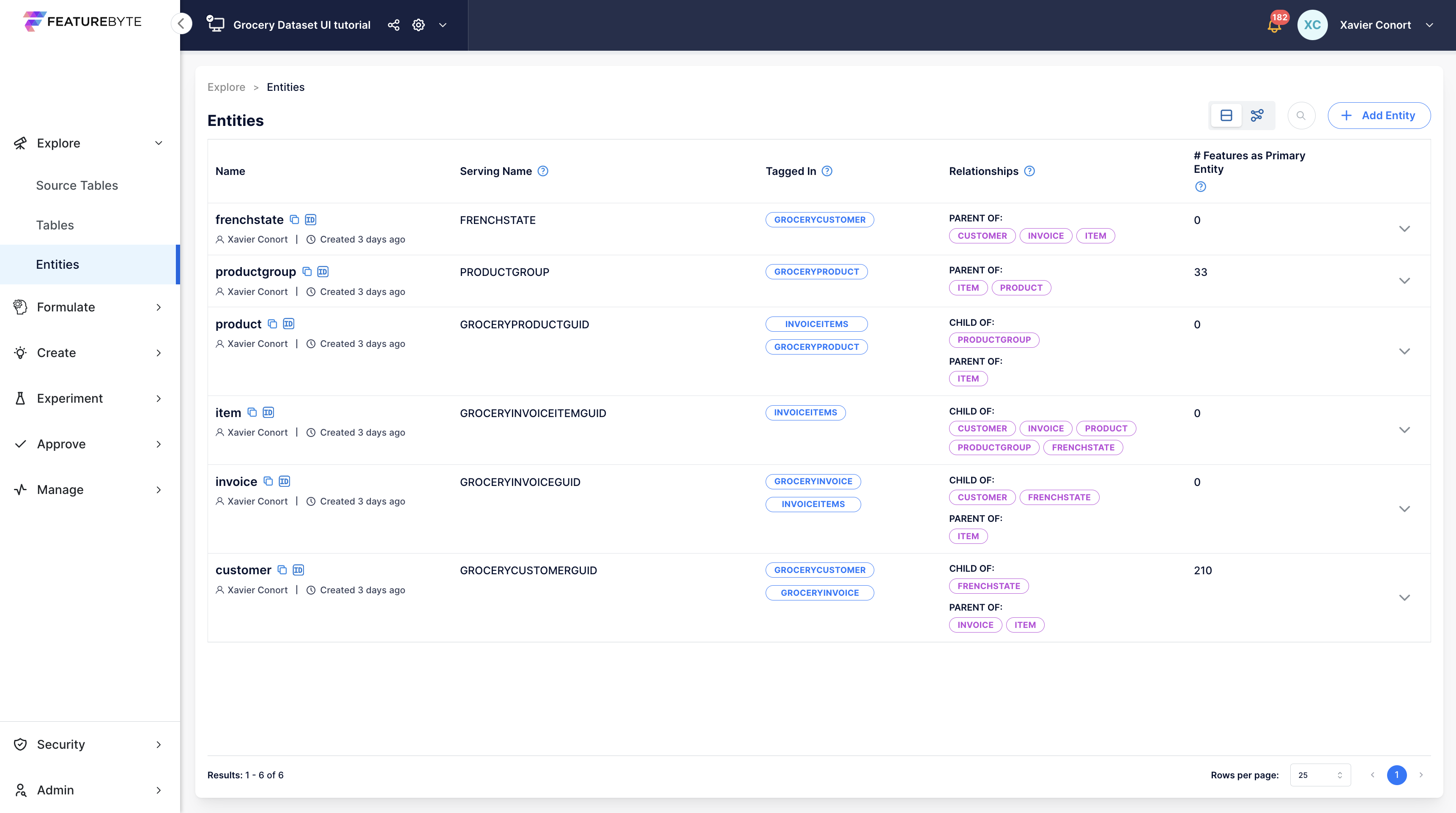

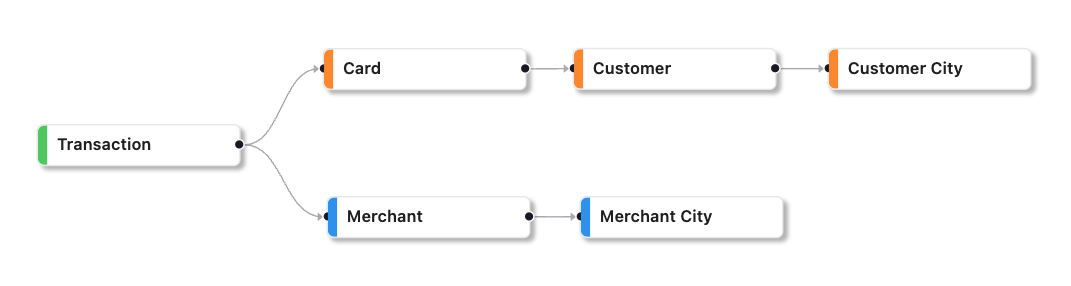

Entity¶

An Entity is a real-world object or concept represented or referenced by columns in your source tables.

Examples

Common examples of entities include customer, merchant, city, product, and order.

In FeatureByte, entities are used to:

- identify the unit of analysis for a feature or a use case

- organizing features, and feature lists in the catalog

- identifying entities that can be used to serve the feature or the feature list.

- establishing table relationships.

SDK Reference

Refer to the Entity object main page and how to add a new entity to a catalog.

User Interface

Learn by example with our 'Register Entities' UI tutorials.

Entity Serving Name¶

An Entity's Serving Name is the name of the unique identifier used to identify the entity during a preview or serving request. It is also the name of the column representing the entity in an observation set. Typically, the serving name for an entity is the name of the primary key (or natural key) of the table that represents the entity. An entity can have multiple serving names for convenience, but the unique identifier should remain unique.

SDK Reference

How to get the serving names of an entity.

User Interface

Learn by example with our 'Register Entities' UI tutorials.

Feature Primary Entity¶

The Primary Entity of a feature defines the level of analysis for that feature.

The Primary Entity is usually a single entity. However, there are cases where it may be a tuple of entities.

An example of when the primary entity becomes a tuple of entities is when a feature results from aggregatiing data based on those entities to measure interactions between them.

Example

Suppose a feature quantifies the interaction between a customer entity and a merchant entity in the past, such as the sum of transaction amounts grouped by customer and merchant in the past four weeks.

The primary entity of this feature is the tuple of customer and merchant.

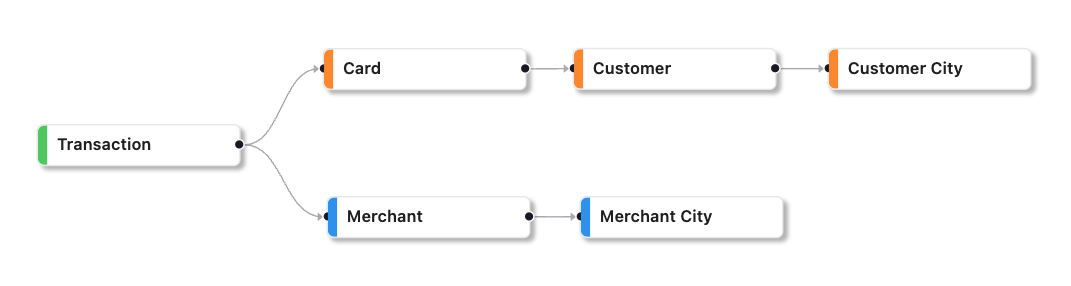

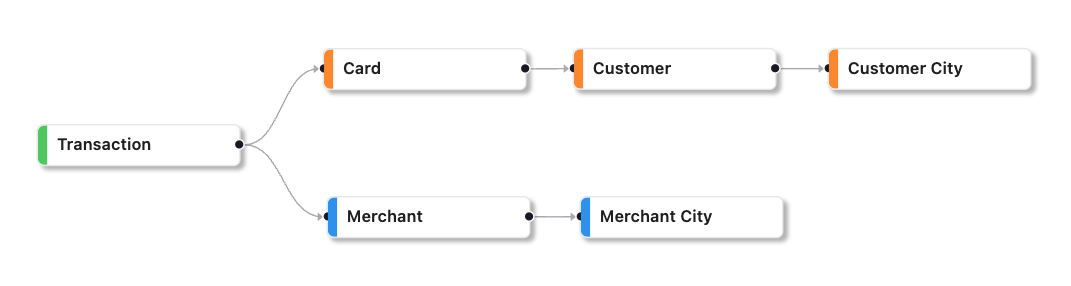

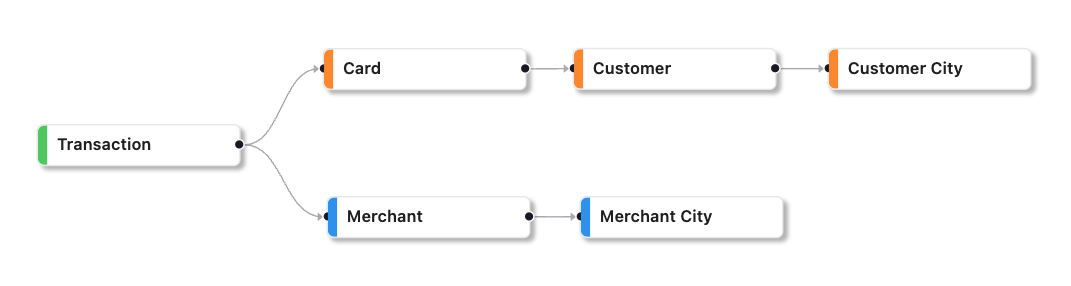

When a feature is derived from features with different primary entities, the primary entity is determined by the entity relationships between the entities. The lowest level entity in the hierarchy is selected as the primary entity. If the entities have no relationship, the primary entity becomes a tuple of those entities.

Example

Consider two entities: customer and customer city, where the customer entity is a child of customer city entity. If a new feature is created that compares a customer's basket with the average basket of customers in the same city, the primary entity for that feature would be the customer entity. This is because the customer entity is a child of the customer city entity and the customer city entity can be deduced automatically.

Alternatively, if two entities, such as customer and merchant, do not have any relationship, the primary entity for a feature that calculates the distance between the customer location and the merchant location would be the tuple of customer and merchant entities. This is because the two entities do not have any parent-child relationship.

SDK Reference

How to get the primary entity of a feature.

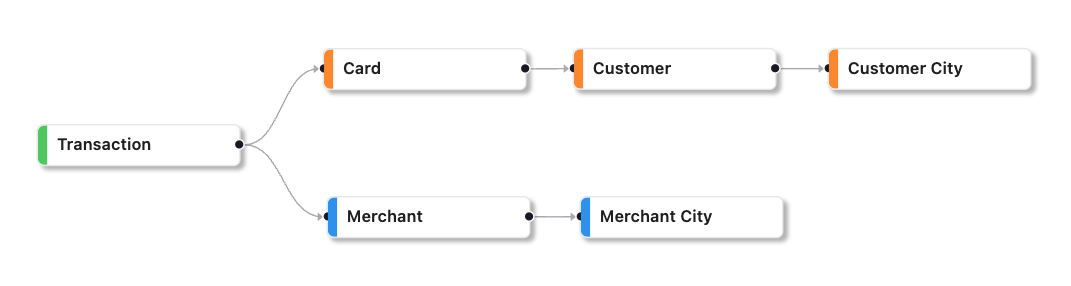

Feature List Primary Entity¶

The primary entity of a feature list determines the entities that can be used to serve the feature list, which typically corresponds to the primary entity of the Use Case that the feature list was created for.

If the features within the list pertain to different primary entities, the primary entity of the feature list is selected based on the entities relationships, with the lowest level entity in the hierarchy chosen as the primary entity. In cases where there are no relationships between entities, the primary entity may become a tuple comprising those entities.

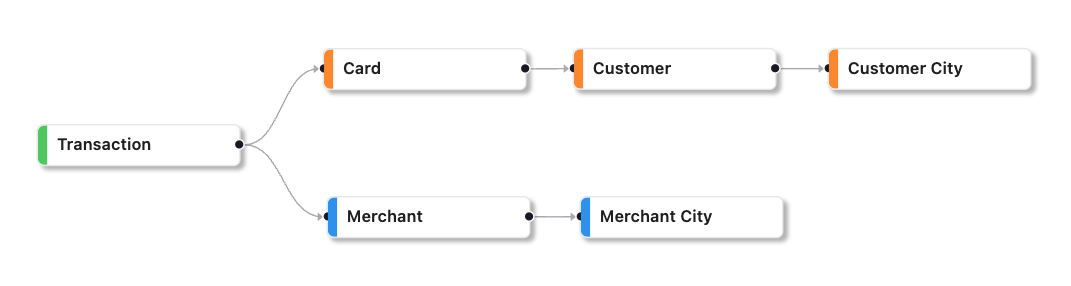

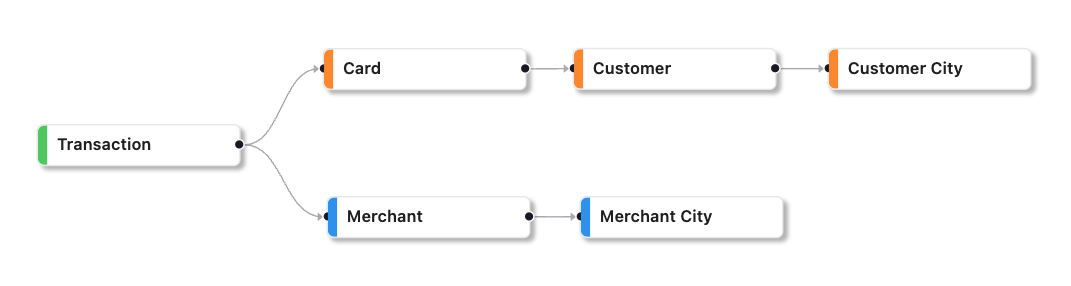

Example

Consider a feature list containing features related to card, customer, and customer city. In this case, the primary entity is the card entity since it is a child of both the customer and customer city entities.

However, if the feature list also contains merchant and merchant city features, the primary entity is a tuple of card and merchant.

SDK Reference

How to get the primary entity of a feature list.

Serving Entity¶

A Serving Entity is any entity that can be used to preview or serve a feature or feature list, regardless of whether it is the primary entity. Serving entities associated with a feature or feature list are typically descendants of the primary entity and uniquely identify the primary entity.

Example

Suppose that a customer is the primary entity for a feature, the serving entities for that feature could include related entities such as the card and transaction entities, which are child or grandchild of the customer entity and uniquely identify the customer.

Use Case Primary Entity¶

In a Use Case, the Primary Entity is the object or concept that defines its problem statement and Context. Usually, this entity is singular, but in cases such as recommendation engines, it can be a tuple of entities such as (Customer, Product).

Observation Table Primary Entity¶

An Observation Table Primary Entity is the entity of the Context or Use Case the table represents.

To utilize an Observation Table for computing historical feature values of a feature list, it's important that its Primary Entity should match the feature list's primary entity or be a related serving entity.

Entity Relationship¶

The parent-child relationship and the supertype-subtype relationship are the two main types of Entity Relationships that can assist feature engineering and feature serving.

The parent-child relationship is automatically established in FeatureByte during the entity tagging process, while identifying supertype-subtype relationships require manual intervention.

These relationships can be used to suggest, facilitate and verify joins during feature engineering and streamline the process of serving feature lists containing multiple entity-assigned features.

Important

Note that FeatureByte only supports parent-child relationships currently. Nevertheless, it is expected that supertype-subtype relationships will also be supported shortly, thus enabling more efficient feature engineering and feature serving.

SDK Reference

Refer to the Relationship object main page or to the specific links:

- list relationships between entities in a catalog.

User Interface

Learn by example with our 'Register Entities' UI tutorials.

Parent-Child Relationship¶

A Parent-Child Relationship is a hierarchical connection that links one entity (the child) to another (the parent). Each child entity key value can have only one parent entity key value, but a parent entity key value can have multiple child entity key values.

Example

Examples of parent-child relationships include:

- Hierarchical organization chart: A company's employees are arranged in a hierarchy, with each employee having a manager. The employee entity represents the child, and the manager entity represents the parent.

- Product catalog: In an e-commerce system, a product catalog may be categorized into categories and subcategories. Each category or subcategory represents a child of its parent category.

- Geographical hierarchy: In a geographical data model, cities are arranged in states, which are arranged in countries. Each city is the child of its parent state, and each state is the child of its parent country.

- Credit Card hierarchy: A credit card transaction is the child of a card and a merchant. A card is a child of a customer. A customer is a child of a customer city. And a merchant is a child of a merchant city.

Note

In FeatureByte, the parent-child relationship is automatically established when the primary key (or natural key in the context of a SCD table) identifies one entity. This entity is the child entity. Other entities that are referenced in the table are identified as parent entities.

Supertype-Subtype Relationship¶

In a data model, a Supertype-Subtype Relationship is a hierarchical relationship between two or more entity types where one entity type (the subtype) inherits attributes and relationships from another entity type (the supertype).

The subtype entity is typically a more specialized version of the supertype entity, representing a subset of the data that applies to a particular domain. Although the subtype entity inherits properties and relationships from the supertype entity, It can have its unique attributes or relationships.

Examples

Here are a few examples of supertype-subtype relationships involving a person, student, and teacher:

- Person is the supertype, while student and teacher are both subtypes of person.

- Student is a subtype of person. This is because a student is a specific type of person who is enrolled in a school or university.

- Teacher is also a subtype of person since a teacher is a specific type responsible for educating and instructing students.

- A more specific subtype of student could be a graduate student, which refers to a student who has already completed a bachelor's degree and is pursuing a higher-level degree.

- Another subtype of teacher could be a professor, typically a teacher with a higher academic rank and significant experience in their field.

Supertype-subtype relationships describe how a more general category (the supertype) can be divided into more specific subcategories (the subtypes). In this case, a person is the most general category, while student and teacher are more specific categories that fall under the broader umbrella of "person."

Entity Catalog¶

The Entities registered in the catalog can be listed and retrieved by name for easy access and management.

User Inferface

Use Case Formulation¶

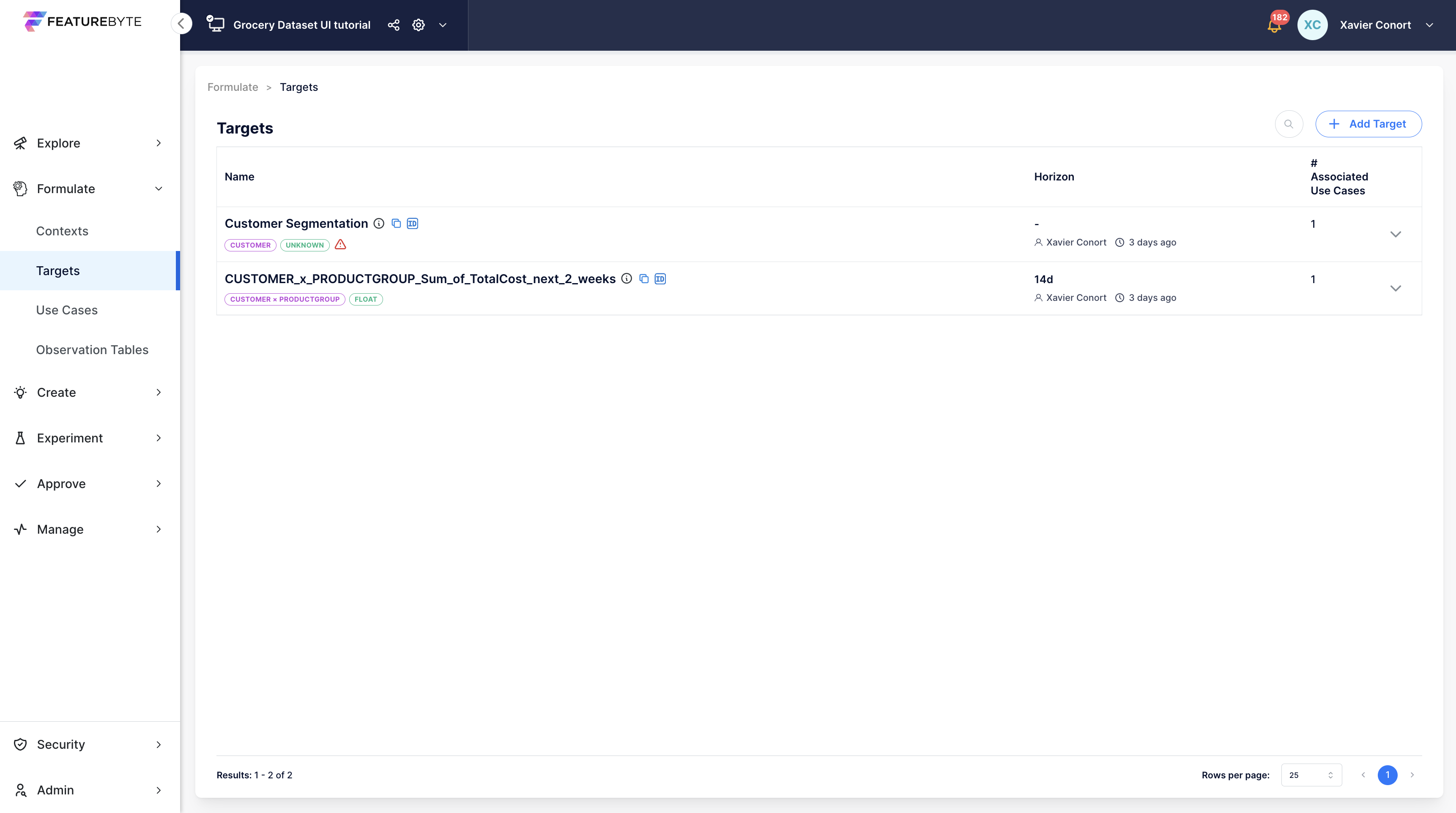

Target¶

In Machine Learning, a "target" refers to the outcome that the model is being trained to predict. It's a critical component in supervised learning, where the goal is to create a model that can accurately forecast or classify the target based on the patterns it identifies in the input features.

In FeatureByte, a target can be established in two ways:

- Descriptive Approach: You directly outline your prediction goal.

- Logical Approach: This technique calculates targets within FeatureByte, mirroring the process of creating features.

SDK Reference

Refer to the Target object main page and how to create a descriptive target

User Interface

Learn by example with our 'Create Use Cases' UI tutorials.

Target Logical Plan¶

The process for establishing a logical plan for a Target closely mirrors that for creating features, with a critical difference: the plan for a Target utilizes forward operations, in contrast to the backward operations applied in feature creation.

Target objects, built upon View objects, come in three varieties:

- Lookup Targets: Directly retrieve values from view attributes for a future point in time.

- Forward Window-based Aggregate Targets: Use forward-looking aggregations over grouped data.

- Aggregate Targets as a Future Point-in-Time: Apply aggregations at a designated future moment.

Additionally, targets can emerge as transformations of existing Target objects, offering various ways to define what you want to predict.

SDK Reference

How to:

Target Definition File¶

The target definition file is the single source of truth for a target. This file is automatically generated when a feature is declared in the SDK or a new version is derived.

The syntax used in the SDK is also used in the target definition file. The file provides an explicit outline of the intended operations of the feature declaration, including those inherited but not explicitly declared by you. These operations may include cleaning operations inherited from tables metadata.

The target definition file is the basis for generating the final logical execution graph, which is then transpiled into platform-specific SQL (e.g. SnowSQL, SparkSQL) for target materialization.

SDK Reference

User Interface

Learn by example with our 'Create Use Cases' UI tutorials.

Target Materialization¶

Materializing target values in FeatureByte using observation sets can be done through two distinct approaches:

- Using

compute_targets(): This method returns a DataFrame filled with target values, suitable for immediate analysis and use. - Using

compute_target_table(): This approach yields an ObservationTable object, representing an observation table suitable for long-term storage and linking with a Use Case for repeated use.

SDK Reference

How to:

User Interface

Learn by example with our 'Create Observation Tables' UI tutorials.

Target Catalog¶

The Targets registered in the catalog can be listed and retrieved by name for easy access and management.

User Inferface

How to list registered Targets.

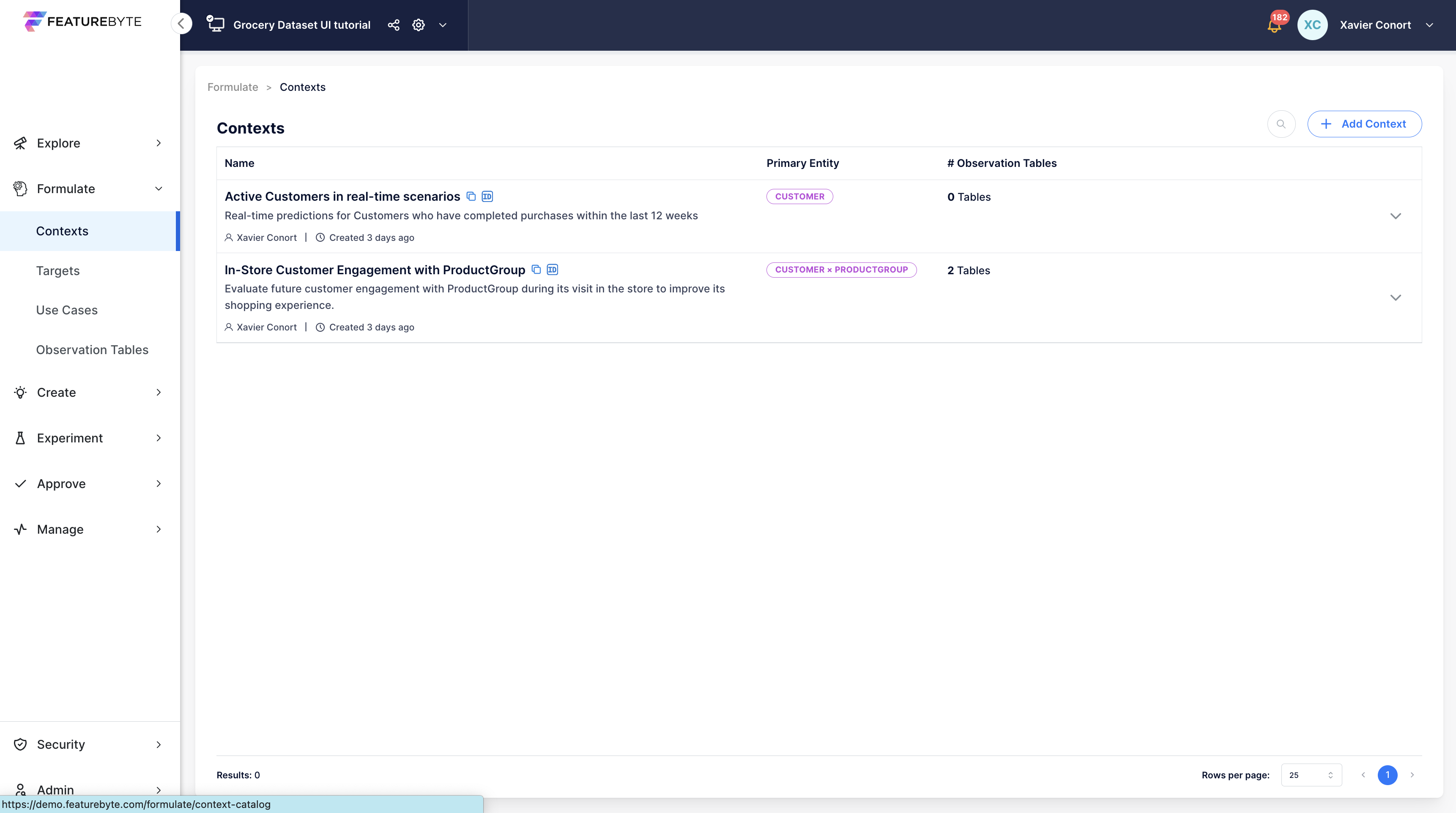

Context¶

A Context defines the scope and circumstances in which features are expected to be served.

Examples

Contexts can vary significantly. For instance:

- Batch Predictions Context: Making weekly batch predictions for an active customer that has made at least one purchase over the past 12 weeks.

- Real-Time Predictions Context: Offering real-time predictions for a credit card transaction that has been recently processed.

While creating a basic context requires only identifying the relevant entity, adding a detailed description is beneficial. This should ideally cover:

- Contextual Subset Details: Characteristics of the entity subset being targeted.

- Serving Timing: Insights into when predictions are needed, whether in batch or real-time scenarios.

- Inference Data Availability: What data is available at the time of inference.

- Constraints: Any legal, operational, or other constraints that might impact the context.

SDK Reference

Refer to the Context object main page and how to create a context.

User Interface

Learn by example with our 'Create Use Cases' UI tutorials.

Context Association with Observation Table¶

After defining a Context, it can be linked to an Observation Table. This process enables the observation table to act as the default preview/eda table for the Context. Additionally, all observation tables associated with the Context can be listed.

SDK Reference

How to:

User Interface

Learn by example with our 'Create Observation Tables' UI tutorials.

Context Catalog¶

The Contexts registered in the catalog can be listed and retrieved by name for easy access and management.

User Inferface

How to list registered Contexts.

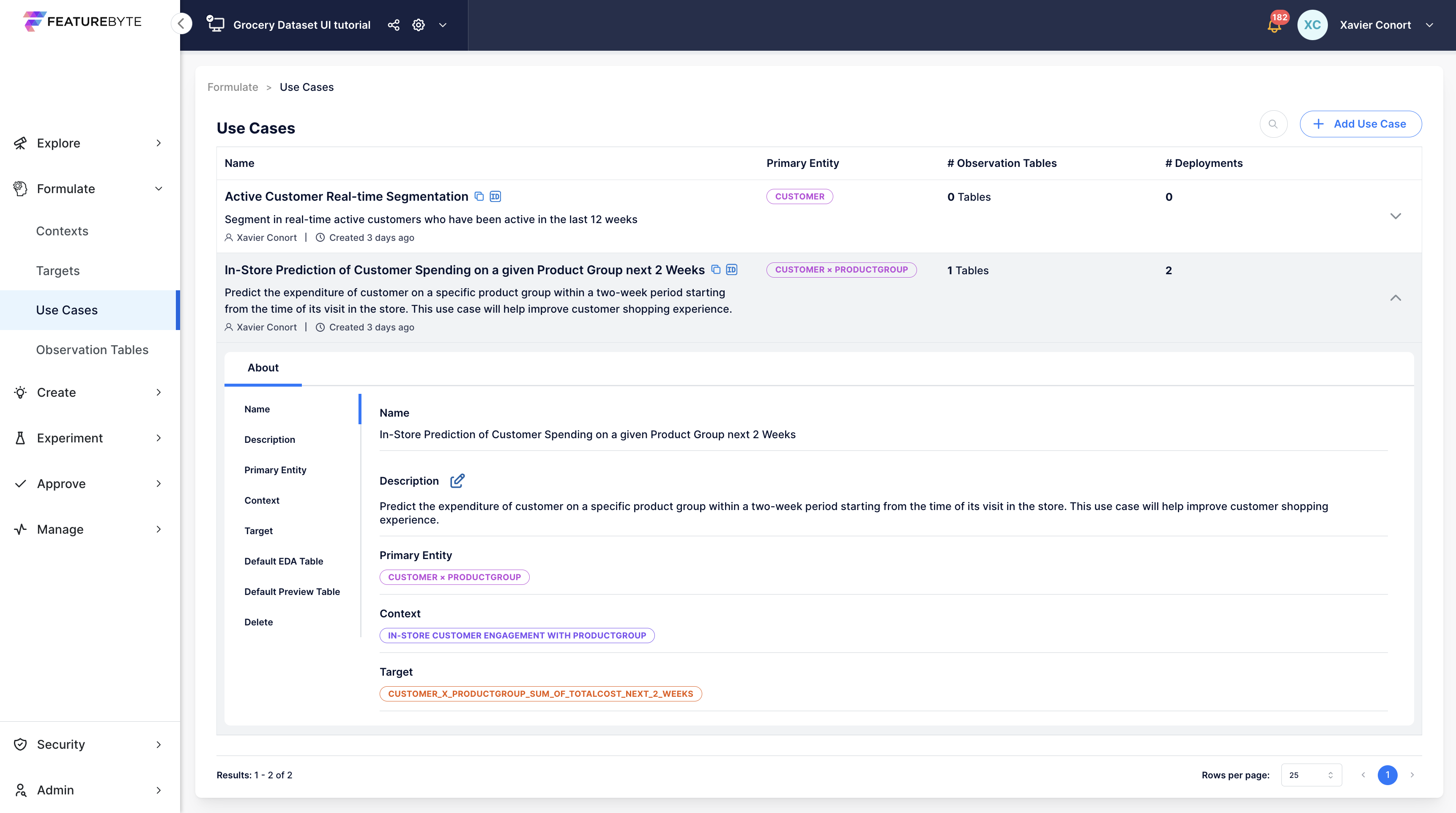

Use Case¶

A Use Case formulates the modelling problem by associating a Context with a Target. Use Cases facilitate the organization of your observation tables, feature tables and deployments. Use Cases also play a crucial role in FeatureByte Copilot, enabling it to provide tailored feature suggestions.

To construct a new Use Case, the following information is required:

-

Select a Context: Choose a registered Context that defines the environment of your Use Case.

-

Define a Target: Specify a registered Target that represents the goal of your Use Case.

Note

The context and target must correspond to the same entities.

For a comprehensive Use Case setup, include a detailed description. Providing a detailed description of the use case, context, and target ensures better documentation and enhances the effectiveness of the FeatureByte Copilot in suggesting relevant features and assessing their semantic relevance.

User Interface

Learn by example with our 'Create Use Cases' UI tutorials.

Use Case Association with Observation Table¶

Observation tables are automatically linked to a Use Case when they are derived from:

- an observation table that is linked to the use case's Context

- a target that is linked to the use case

An observation table can be manually linked to the Use Case to support cases where the observation table is not derived from another observation table.

This process enables the observation table to act as the default preview/eda table for the Use Case. Additionally, all observation tables associated with the Use Case can be listed.

SDK Reference

How to:

Use Case Association with Feature Table¶

Feature tables are automatically associated with use cases via the observation tables they originate from.

Feature tables associated with a use case can be listed easily from the Use Case object.

Use Case Association with Deployment¶

A deployment is associated with a use case when the use case is specified during the deployment of the related feature list.

Deployments associated with a use case can be listed easily from the Use Case object.

Use Case Catalog¶

The Use Cases registered in the catalog can be listed and retrieved by name for easy access and management.

User Inferface

How to list registered Use Cases.

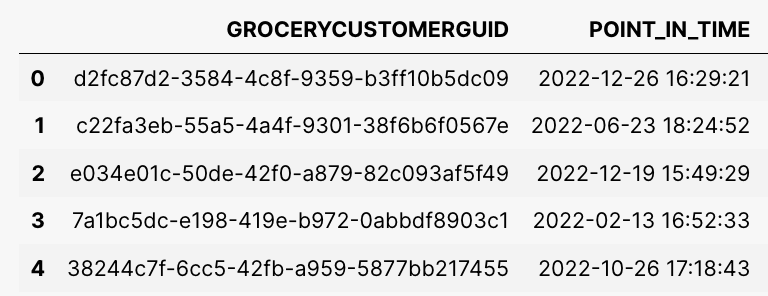

Observation Set¶

An Observation Set is essentially a collection of historical data points that serve as a foundation for learning. Think of it as the backbone of a training dataset. Its primary role is to process and compute features, which then form the training data for Machine Learning models. For a given use case, the same Observation Table is often employed in multiple experiments. However, the specific features chosen and the Machine Learning models applied may vary between these experiments.

Each data point represents a historical moment for a particular entity and may include target values.

Ideally, an observation set should be explicitly linked to a specific Context or Use Case, ensuring thorough documentation and facilitating its reuse.

Other important considerations when constructing an Observation Set are:

- Choosing the Right Entity Key Values: Select values that represent your target population accurately for each historical timestamp.

- Accuracy in Timestamps: Ensure all timestamps are in Coordinated Universal Time (UTC) and cover a sufficient range to depict seasonal changes. They should represent the expected time distribution in real-world scenarios.

- Maintaining Data Integrity: Avoid time leakage (future data in the training set) by spacing out your timestamps correctly.

Example

To predict customer churn every Monday morning over six months, you might:

- Use historical timestamps from Monday mornings of the past years

- Choose customer keys randomly from the active customer base at those times.

- Set intervals longer than six months between data points for each customer to avoid time leakage.

Technical Details

- The entity values column should have an accepted serving name.

- Label the timestamps column as "POINT_IN_TIME" and use UTC.

- In FeatureByte, an Observation Set can be a pandas DataFrame or an Observation Table object from the feature store.

Once an Observation Set is defined, you can use it to materialize a feature list into historical feature values to form a training or testing set for your Machine Learning model.

SDK Reference

How to:

Observation Table¶

An Observation Table is an observation set integrated in the catalog. It can be created from various sources and is essential for sharing and reusing data within the feature store.

SDK Reference

Refer to the ObservationTable object main page or to the specific links:

User Interface

Learn by example with our 'Create Observation Tables' UI tutorials.

Observation Table Association with a Context or Use Case¶

Once added to the catalog, an Observation Table can be linked to specific Contexts or Use Cases.

For Use Case linkage, you can include the Use Case's Target values by materializing them with a table associated with its Context.

SDK Reference

How to:

Observation Table Purpose¶

Tagging an Observation Table with purposes like 'preview', 'eda', 'training' or 'validation_test' facilitates its identification and reuse.

Default eda and preview tables can also be set for a Context or a Use Case.

SDK Reference

How to:

Observation Table Catalog¶

The Observation Tables registered in the catalog can be listed and retrieved by name for easy access and management.

SDK Reference

How to:

- list observation tables available in a catalog

- get an observation table from a catalog

- and get an observation table by its Object ID from a catalog

Views and Column Transforms¶

View¶

A view is a local virtual table that can be modified and joined to other views to prepare data before feature definition. A view does not contain any data of its own.

Views in FeatureByte allow operations similar to Pandas, such as:

- creating and transforming columns and extracting lags

- filtering records, capturing attribute changes, and joining views

Unlike Pandas DataFrames, which require loading all data into memory, views are materialized only when needed during previews or feature materialization.

View Creation¶

When a view is created, it inherits the metadata of the FeatureByte table it originated from. Currently, five types of views are supported:

- Event Views created from an Event table

- Item Views created from an Item table

- Dimension Views created from a Dimension table

- Slowly Changing Dimension (SCD) Views created from a SCD table

- Change Views created from a SCD table.

Two view construction modes are available:

- Auto (default): Automatically cleans data according to default operations specified for each column within the table and excludes special columns not meant for feature engineering.

- Manual: Allows custom cleaning operations without applying default cleaning operations.

Although views provide access to cleaned data, you can still perform data manipulation using the raw data from the source table. To do this, utilize the view's raw attribute, which enables you to work directly with the unprocessed data of the source table.

SDK Reference

Refer to the View object main page or to the specific links:

Change View¶

A Change View is created from a Slowly Changing Dimension (SCD) table to provide a way to analyze changes that occur in an attribute of the natural key of the table over time. This view consists of five columns:

- the natural key of the SCD table,

- the change timestamp, which is equal to the effective timestamp of the SCD table,

- the prior effective timestamp,

- the value of the attribute before the change occurred,

- and the value of the attribute after the change occurred.

Once the Change View is created, it can be used to generate features in the same way as features from an Event View.

Examples

Changes to a SCD table can provide valuable insights into customer behavior, such as:

- the number of times a customer has moved in the past six months,

- their previous address if they recently moved,

- whether they have gone through a recent divorce,

- if there are new additions to their family,

- or if they have started a new job.

SDK Reference

How to create a Change View from a SCD table.

Filters¶

Filters are an essential element in feature engineering strategies. They enable the segmentation of data into sub-groups, which facilitates specific operations and analyses:

- Targeted Aggregations: Filters allow for meaningful aggregations of data that would otherwise be nonsensical. For instance, transactions can be categorized by their outcomes such as "Authorized", "Approved", or "Cancelled".

- Focused Analysis: By using filters, it is possible to narrow down the analysis to specific event types and derive additional, relevant features for those types. For example, analyzing transactions by weekday may yield insightful trends for "Purchases" but may be less significant for "Banking Fees".

FeatureByte Copilot leverages Generative AI to aid enterprise users in identifying effective filters.

Within our SDK, users can manipulate data similarly to how one would use a Pandas DataFrame. It is possible to create new views from subsets of views. Additionally, a condition-based subset can be used to replace the values of a column.

View Sample¶

Using the sample method, a view can be materialized with a random selection of rows for a given time range, size, and seed to control sampling.

Note

Views from tables in a Snowflake data warehouse do not support the use of seed.

SDK Reference

How to materialize a sample of a view.

View Join¶

To join two views, use the join() method of the left view and specify the right view object in the other_view parameter. The method will match rows from both views based on a shared key, which is either the primary key of the right view or the natural key if the right view is a Slowly Changing Dimension (SCD) view.

If the shared key identifies an entity that is referenced in the left view or the column name of the shared key is the same in both views, the join() method will automatically identify the column in the left view to use for the join.

By default, a left join is performed, and the resulting view will have the same number of rows as the left view. However, you can set the how parameter to 'inner' to perform an inner join. In this case, the resulting view will only contain rows where there is a match between the columns in both tables.

When the right view is an SCD view, the event timestamp of the left view determines which record of the right view to join.

Note

For Item View, the event timestamp and columns representing entities in the related event table are automatically added. Additional attributes can be joined using the join_event_table_attributes() method.

Important

Not all views can be joined to each other. SCD views cannot be joined to other SCD views, while only dimension views can be joined to other dimension views. Change views cannot be joined to any views.

View Column¶

A View Column is a column within a FeatureByte view. When creating a view, a View Column represents the cleaned version of a table column. The cleaning procedure for a View Column depends on the view's construction mode and typically follows the default cleaning operations associated with the corresponding table column.

By default, special columns not intended for feature engineering are excluded from view columns. These columns may consist of record creation and expiration timestamps, surrogate keys, and active flags.

You can add new columns to a view by performing joins or by deriving new columns from existing ones.

If you wish to add new columns derived from the raw data in the source table, use the view's raw attribute to access the source table's unprocessed data.

SDK Reference

Refer to the ViewColumn object main page or to the specific links:

- obtain view columns info

- access raw data

- and obtain descriptive statistics for a view column.

View Column Transforms¶

View Column Transforms refer to the ability to apply transformation operations on columns within a view. By applying these transformation operations, you can create a new column. This new column can either be reassigned to the original view or utilized for further transformations.

The different types of transforms include:

Additionally, you have the option to apply custom SQL User-Defined Functions (UDFs) on view columns. This is particularly useful for integrating transformer models with FeatureByte.

Generic Transforms¶

SDK Reference

You can apply the following transforms to columns of any data type in a view:

isnull: Returns a new boolean column that indicates whether each row is missing.notnull: Returns a new boolean column that indicates whether each row is not missing.isin: Returns a new boolean column showing whether each element in the view column matches an element in the passed sequence of valuesfillna: Replaces missing values in-place with specified values.astype: Converts the data type of the column.

Numeric Transforms¶

SDK Reference

In addition to built-in arithmetic operators (+, -, *, /, etc), you can apply the following transforms to columns of numeric type in a view:

String Transforms¶

API Reference

In addition to string columns concatenation, you can apply the following transforms to columns of string type in a view:

len: Returns the length of the stringlower: Converts all characters to lowercaseupper: Converts all characters to uppercasestrip: Trims white space(s) or a specific character on the left & right string boundarieslstrip: Trims white space(s) or a specific character on the left string boundariesrstrip: Trims white space(s) or a specific character on the right string boundariesreplace: Replaces substring with a new stringpad: Pads string up to the specified width sizecontains: Returns a boolean flag column indicating whether each string element contains a target stringslice: Slices substrings for each string element

Datetime Transforms¶

The date or timestamp (datetime) columns in a view can undergo the following transformations:

- Calculate the difference between two datetime columns.

- Add a time interval to a datetime column to generate a new datetime column.

- Extract date components from a datetime column.

Note

Date parts for columns or features using timestamp with time zone offset are based on the local time instead of UTC.

Date parts for columns or features using event timestamps of Event tables, where a separate column was specified to provide the time zone offset information, will also be based on the local time instead of UTC.

SDK Reference

How to extract date components:

microsecond: Returns the microsecond component of each elementmillisecond: Returns the millisecond component of each elementsecond: Returns the second component of each elementminute: Returns the minute component of each elementhour: Returns the hour component of each elementday: Returns the day component of each element in a view columnday_of_week: Returns the day of week component of each elementweek: Returns the week component of each elementmonth: Returns the month component of each elementquarter: Returns the quarter component of each elementyear: Returns the year component of each element

Lag Transforms¶

The use of Lag Transforms enables the retrieval of the preceding value associated with a particular entity in a view.

This, in turn, makes it feasible to compute essential features, such as those that depend on inter-event time and the proximity to the previous point.

Note

Lag transforms are only supported for Event and Change views.

SDK Reference

How to extract lags from a view column.

UDF Transforms¶

A SQL User-Defined Function (UDF) is a custom function created by users to execute specific operations not covered by standard SQL functions. UDFs encapsulate complex logic into a single, callable routine.

An application of this is in computing text embeddings using transformer-based models or large language models (LLMs), which can be formulated as a UDF.

Creating a SQL Embedding UDF

For step-by-step guidance on creating a SQL Embedding UDF, visit the Bring Your Own Transformer tutorials.

SDK Reference

Refer to the UserDefinedFunction object main page or to the specific links:

- make the function available to the FeatureByte SDK,

- retrieve a UDF instance from the catalog,

Feature Creation¶

Features¶

Input data used to train Machine Learning models and compute predictions is referred to as features.

These features can sometimes be derived from attributes already present in the source tables.

Example

A customer churn model may use features obtained directly from a customer profile table, such as age, gender, income, and location.

However, in many cases, features are created by applying a series of row transformations, joins, filters, and aggregates.

Example

A customer churn model may utilize aggregate features that reflect the customer's account details over a given period, such as

- the customer entropy of product types purchased over the past 12 weeks,

- the customer count of canceled orders over the past 56 weeks,

- and the customer amount spent over the past seven days.

FeatureByte offers two ways to create features:

- Manually: Using the SDK declarative framework

- Automatically: Using FeatureByte Copilot

Feature Object¶

A Feature object in FeatureByte SDK contains the logical plan to compute the feature.

There are three ways to define the plan for Feature objects from views:

Additionally, Feature objects can be created as transformations of one or more existing features.

SDK Reference

Refer to the Feature object main page or to the specific links:

- create a Lookup feature,

- and group by entity for Aggregates and Cross Aggregates.

Lookup Features¶

A Lookup Feature refers to an entity’s attribute in a view at a specific point-in-time. Lookup features are the simpler form of a feature as they do not involve any aggregation operations.

When a view's primary key identifies an entity, it is simple to designate its attributes as features for that particular entity.

Examples

Examples of Lookup features are a customer's birthplace retrieved from a Customer Dimension table or a transaction amount retrieved from a Transactions Event table.

When an entity serves as the natural key of an SCD view, it is also possible to assign one of its attributes as a feature for that entity. However, in those cases, the feature is materialized through point-in-time joins, and the resulting value corresponds to the active row at the point-in-time specified in the feature request.

Example

A customer feature could be the customer's street address at the request's point-in-time.

When dealing with an SCD view, you can specify an offset if you want to get the feature value at a specific time before the request's point-in-time.

Example

By setting the offset to 9 weeks in the previous example, the feature value would be the customer's street address nine weeks before the request's point-in-time.

SDK Reference

How to create a Lookup feature.

Aggregate Features¶

Aggregate features are a fundamental aspect of feature engineering, essential for transforming transactional data into meaningful insights. These features are derived by applying a range of aggregation functions to data points grouped by one or more entities.

Supported aggregation functions include:

- Latest: This function retrieves the most recent value in a column for an entity. It's particularly useful for datasets where the latest information is of prime importance, such as in tracking recent user activity.

- Count Counts the number of occurrences for an entity. Useful in scenarios requiring a count of events or items, like the number of transactions per customer or the frequency of specific events.

- NA Count Tallies the number of missing data points in a column for an entity. This is particularly valuable in datasets where the presence of missing data can indicate significant trends or issues.

- Sum: Calculates the total sum of a colum values for an entity. This function is essential in aggregating numerical data, such as totaling expenditures per customer or aggregating resource usage.

- Average (Mean): Computes the mean value of column values for an entity. This function is key in finding the average or typical value, applicable in various contexts like calculating the average spending of customers or the average temperature over a period. It is also applicable in computing the mean vector of embeddings in multi-dimensional data spaces, useful in fields like natural language processing or image analysis.

- Minimum and Maximum: Identifies the lowest and highest values in a column for an entity, respectively. They are essential for understanding the range of data, such as the minimum and maximum temperatures recorded. The Maximum function is particularly useful in text embeddings to highlight the most significant features in text data.

- Standard Deviation: Calculates the measure of variability or dispersion around the mean of column values for an entity. It's significant in assessing the spread or distribution of data points.

SDK Reference

How to access the list of aggregation methods.

While leveraging these aggregation functions, it's crucial to incorporate the temporal dimension of the dataset to ensure meaningful and contextually relevant aggregations. Ignoring the temporal dimension would also lead to temporal leakage.

There are three main types of aggregate features:

Note

If a feature is intended to capture patterns of interaction between two or more entities, these aggregations are grouped by the tuple of the entities. For instance, an aggregate feature can be created to show the amount spent by a customer with a merchant in the past.

SDK Reference

How to create:

- a non-temporal aggregate feature,

- an aggregate over feature,

- an aggregate "asat" feature.

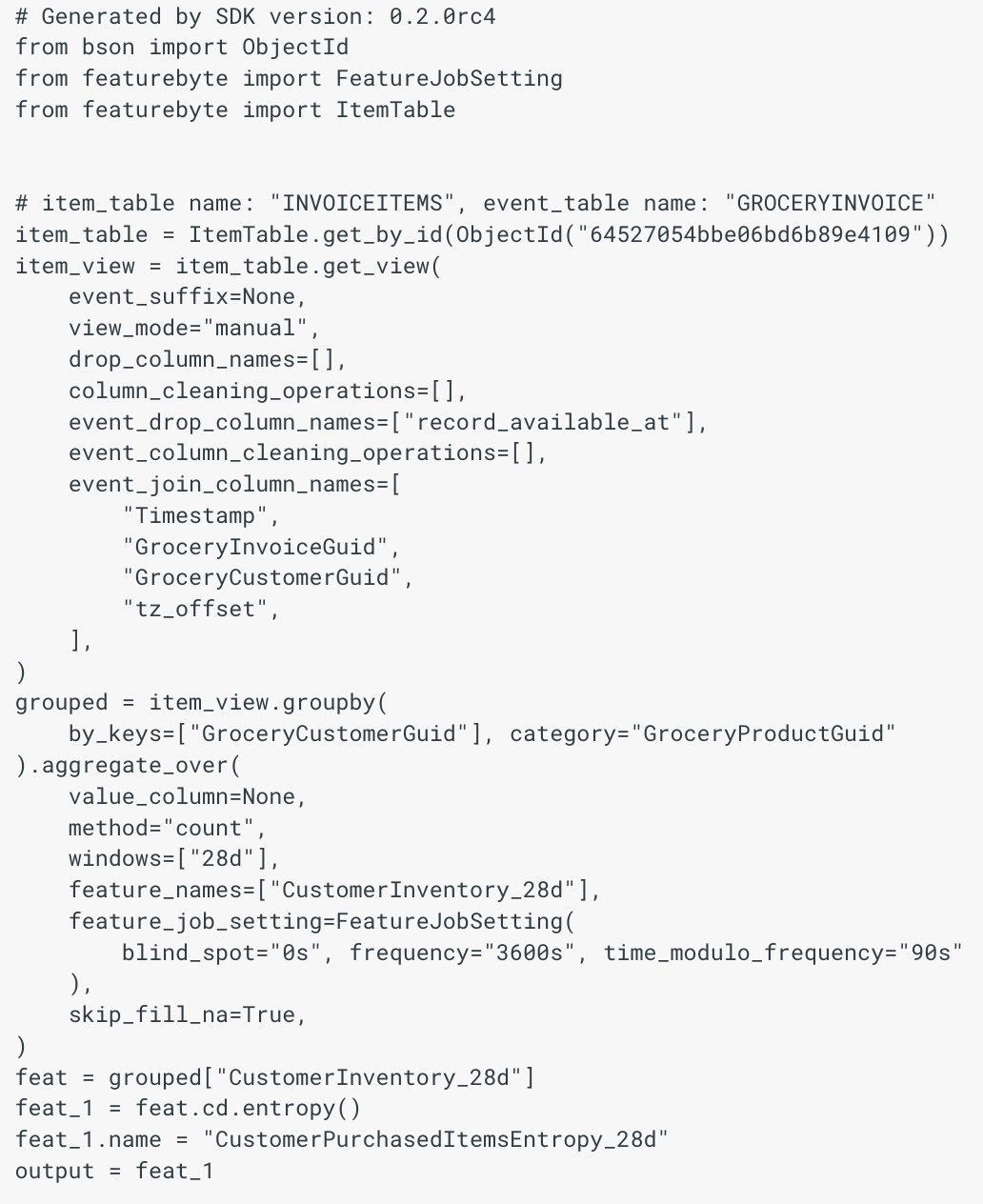

Cross Aggregate Features¶

Cross Aggregate Features in FeatureByte provide a powerful mechanism to aggregate data across categorical columns, enabling sophisticated data analysis and insight generation. This functionality allows you to categorize data into groups (a process known as 'bucketing') based on categorical column values and perform various aggregation operations like counting records within each category or summing up values of a numeric column for those categories. Beyond counting and summing, you can employ additional aggregation methods tailored to your analysis needs.

This feature facilitates advanced analytical tasks, such as:

-

Entropy Analysis: This involves assessing the entropy in data distributions that emerge from aggregating sums or counts across categories. Such analysis is crucial for understanding data variability or diversity, shedding light on the unpredictability in aspects like customer behavior or product performance.

-

Temporal and Comparative Distribution Analysis: This feature enables the comparison of category-based distributions over time or against overarching groups. It's instrumental in tracking how engagements within categories evolve over time or in relation to larger entities.

-

Identifying Key Categories: This aspect focuses on uncovering significant trends or preferences within your data. It includes:

- Identifying the most frequently occurring category, highlighting prevalent trends.

- Pinpointing categories with the highest or lowest aggregated values, such as sales or user engagement, to recognize outstanding or lagging areas.

- Aggregating values for a specific category to gain detailed insights into particular segments of interest.

-

Prevalence of Entity Attributes: This involves a multi-step process to evaluate the commonality of certain attributes within entities, such as assessing customer age bands across products. Steps might include aggregating by product across age bands, followed by a comprehensive aggregation across these bands, and then analyzing proportions to understand demographic affinities or discrepancies for specific products.

Example Use Case

Imagine analyzing customer spending habits. A Cross Aggregate feature might calculate the total amount spent by each customer across different product categories over a specified period. This aggregation offers insights into customer spending patterns or preferences, enriching understanding of behavior across various product categories.

Technical Implementation

When computing Cross Aggregate features for an entity (e.g., a customer), the outcome is typically structured as a dictionary. This dictionary's keys are the product categories engaged by the customer, with values representing total expenditure in each category. This structure effectively captures the customer's cross-category spending behavior, providing a holistic view of their purchase preferences.

Like other types of Aggregate Features, it is important to consider the temporal aspect when conducting aggregation operations. The three main types of Cross Aggregate features include:

- Non-Temporal Cross Aggregate,

- Cross Aggregate Over a Window

- Cross Aggregate "As At" a Point-in-Time.

SDK Reference

How to group by entity across categories to perform cross aggregates.

Non-Temporal Aggregates¶

Non-Temporal Aggregate features refer to features that are generated through aggregation operations without considering any temporal aspects. In other words, these features are created by aggregating values without considering the order or sequence in which they occur.

Important

To avoid time leakage, the non-temporal aggregate is only supported for Item views, when the grouping key is the event key of the Item view. An example of such features is the count of items in Order.

Note

Non-temporal aggregate features obtained from an Item view can be added as a column to the corresponding event view. Once the feature is integrated, it can be aggregated over a time window to create aggregate features over a window. For instance, you can calculate a customer's average order size over the last three weeks by using the order size feature extracted from the Order Items view and aggregating it over that time frame in the related Order view.

Aggregates Over A Window¶

Aggregates over a window refer to features generated by aggregating data within a specific time frame. These types of features are commonly used for analyzing event and item data.

The duration of the window is specified when the feature is created. The end point of the window is determined when the feature is served, based on the point-in-time values specified by the feature request and the feature job setting of the feature.

SDK Reference

How to create an aggregate over feature.

Aggregates “As At” a Point-In-Time¶

Aggregates "As At" a Point-In-Time are features that are generated by aggregating data that is active at a particular moment in time. These types of features are only available for slowly changing dimension (SCD) views and the grouping key used for generating these features should not be the natural key of the SCD view.

You can specify an offset, if you want the aggregation to be done on rows active at a specific time before the point-in-time specified by the feature request.

Example

An aggregate ‘as at’ feature from a Credit Cards table could be the customer's count of credit cards at the specified point-in-time of the feature request.

With an offset of 2 weeks, the feature would be the customer's count of credit cards 2 weeks before the specified point-in-time of the feature request.

SDK Reference

How to create an aggregate "asat" feature.

Aggregates Of Changes Over a Window¶

Aggregates of changes over a window are features that summarize changes in a Slowly Changing Dimension (SCD) table within a specific time frame. These features are created by aggregating data from a Change view that is derived from a column in the SCD table.

Example

One possible aggregate feature of changes over a window could be the count of address changes that occurred within the last 12 weeks for a customer.

SDK Reference

How to create:

- a change view from a SCD table.

- and an aggregate over feature from a change view.

Temporal Window¶

In feature engineering, a "Temporal Window" refers to a specific period over which data points are gathered and analyzed to extract valuable features for modeling. Employing multiple windows enables the capture of dynamics across short, medium, and long-term intervals within the data.

Window Size determines the duration of the temporal window (e.g., minutes, hours, days, weeks), and its selection depends on the specific use case and data characteristics.

FeatureByte Copilot assists enterprise users in identifying the most appropriate window sizes for their particular applications.

Examples

- Shop Sum of sales over the past 4 weeks.

- Total call duration by a customer over the week.

- Rolling average of heart rate variability over the last 24 hours.

- Maximum machine temperature recorded in the last 30 minutes.

Edge Effects

- At the Beginning of the Data: Ensure the starting point of your training data is after the initial table observations plus the window size. This adjustment prevents incomplete data windows at the start of the dataset.

- At the End of the Data: Set a sufficiently large blind spot in the feature job settings to account for the potential unavailability of the most recent data points due to data latency.

Feature Transforms¶

Feature Transforms is a flexible functionality that allows the generation of new features by applying a broad range of transformation operations to existing features. These transformations can be applied to individual features or multiple features from the same or distinct entities.

The available transformation operations resemble those provided for view columns. However, additional transformations are also supported for features resulting from Cross Aggregate features.

Features can also be derived from multiple features and the points-in-time provided during feature materialization.

Examples of features derived from Cross Aggregates

- Most common weekday for customer visits in the past 12 week

- Count of unique items purchased by a customer in the past 4 weeks

- List of distinct items bought by a customer in the past 4 weeks

- Amount spent by a customer on ice cream in the past 4 weeks

- Weekday entropy for customer visits in the past 12 weeks

Examples of features derived from multiple features

- Similarity between customer’s basket during the past week and past 12 weeks

- Similarity between a customer's item basket and the baskets of customers in the same city over the past 2 weeks

- Order amount z-score based on a customer's order history over the past 12 weeks

SDK Reference

How to transform the dictionary output of cross aggregate features:

get_value: Retrieves the value based on the key provided.most_frequent: Retrieves the most frequent key.unique_count: Computes number of distinct keys.entropy: Computes the entropy over the keys.get_rank: Computes the rank of a particular key.get_relative_frequency: Computes the relative frequency of a particular key.cosine_similarity: Computes the cosine similarity with another cross aggregate feature.

FeatureByte Copilot¶

FeatureByte Copilot is an AI-powered tool designed to enhance the process of feature creation.

Key Features¶

Identifying Relevant Data¶

- Data Location: Finds relevant tables and entities for specific use cases.

- Semantic Tagging: Employs Generative AI to tag data columns without semantic tags, aligning with a specialized ontology for feature engineering.

Feature Engineering Recommendations¶

- Time Window Recommendation: Suggests specific time windows for data aggregation based on the use case.

- Data Filtering Guidance: Provides advice on data filtering while considering various event types and their statuses.

- Identification of Key Numeric Aggregation Column: Recommends for each table a numeric column for constructing aggregated features across categories allowing advanced feature engineering and complement features that would solely rely on counts.

Automatic Feature Proposal¶

- Feature Proposals: Automatically proposes features post-establishment of data semantics, time periods, and filters, adhering to feature engineering best practices.

Feature Evaluation and Compilation¶

- Relevance Evaluation: Uses Generative AI to assess the relevance of features to the intended use case.

- Redundancy Check: Cross-references with existing features to prevent feature redundancy.

Feature Integration Methods¶

- Direct Catalog Addition: Offers a no-code interface for straightforward integration into the Catalog.

- Notebook Download Option: Allows downloading notebooks for detailed examination and customization.

User Interface

See FeatureByte in action in our UI tutorials: Discover and Create Features with FeatureByte Copilot.

For more in-depth information, refer to our White Paper on FeatureByte Copilot.

Feature Catalog¶

The Features registered in the catalog can be listed and retrieved by name for easy access and management.

In the SDK, features can be filtered based on two key attributes:

SDK Reference

- list features in a catalog,

- get a feature from a catalog,

Self-Organized Feature Catalog¶

FeatureByte Enterprise enhances the Feature Catalog with advanced capabilities:

- Use Case Compatibility: It ensures that only features compatible with a defined Use Case are displayed, as detailed in Feature Compatibility with a Use Case.

- Signal Type Categorization: Features are categorized by their Signal Type, facilitating easier identification and use.

-

Thematic Organization: Features are organized thematically, incorporating three key aspects:

- The feature's Primary Entity

- The feature's Primary Table

- The feature's Signal Type

In addition to basic filters, advanced filtering options in FeatureByte Enterprise include:

- Signal Type.

- Online Status.

- Production readiness.

- Feature data types.

User Interface

Learn by example with our 'Create Feature List' UI tutorials.

Feature Compatibility with a Use Case¶

In the context of a Use Case, it's crucial to ensure that the features are compatible with the Use Case Primary Entity . For a feature to be considered compatible, its Primary Entity must align with the Use Case Primary Entity in one of two ways:

- Direct Match: The feature's Primary Entity should be the same as the Use Case Primary Entity.

- Hierarchical Relationship: The feature's Primary Entity should be either a parent or grandparent of the Use Case Primary Entity.

Example

Consider the following scenario:

Use Case: Card Default Prediction. Use Case Primary Entity: Card.

Feature in Question: A feature that records the maximum distance between a customer's residence and the locations of their card transactions over the past 24 hours. Feature Primary Entity: Customer.

Analysis: This feature is compatible with the Use Case. Despite the Feature Primary Entity being 'Customer', it is directly linked to the 'Card' entity, which uniquely identifies each customer. Therefore, the feature can be effectively utilized in the Card Default Prediction Use Case.

In FeatureByte Enterprise, this concept plays a crucial role by ensuring that only features compatible with a defined Use Case are displayed in the Feature Catalog. This functionality streamlines the selection process and enhances the overall effectiveness of Use Case implementation.

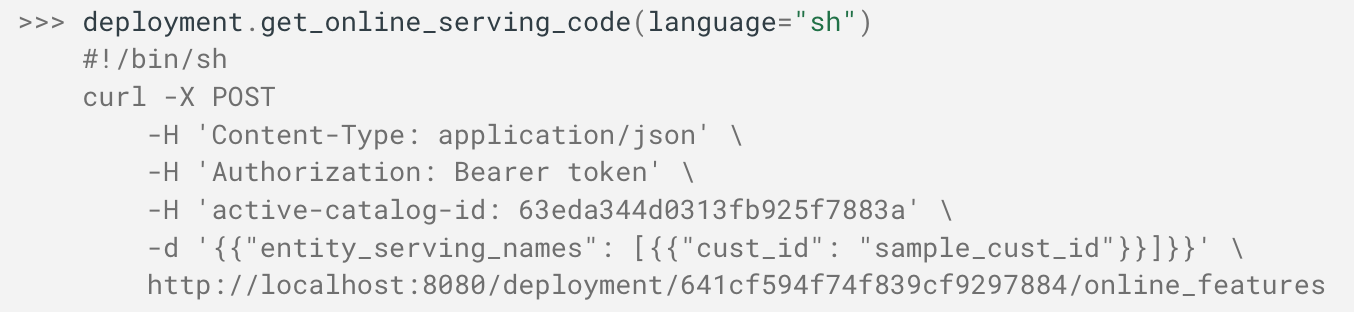

Feature Signal Type¶