Deploying using Databricks MLFlow

Introduction¶

MLFlow is an open source platform for managing machine learning life cycles. It provides experimentation and deployment capabilities. The latter allows serving models via low-latency HTTP interface, i.e. exposing any machine learning model as an HTTP endpoint. MLFlow can be deployed separately, but it also comes with Databricks, that’s why if the data warehouse is based on Databricks or if infrastructure already includes MLFlow as a component it is possible to register (deploy) to MLFlow and call from the UDF function.

In this section we will go through the steps on how to deploy any transformer model to Databricks provided MLFlow deployment, but all steps are valid for any custom MLFlow deployment as well.

Steps¶

In order to execute the following steps we will need a Databricks account with permissions to create ML clusters. We will use the SBERT Transformer model, but any other model will also work.

Steps we need to perform:

-

Create compute cluster

-

Create notebook

-

Register model in model registry

-

Verify model is available over HTTP

Create compute cluster¶

Note

This step is Databricks specific. If you are using vanilla MLFlow you can proceed to step 3 “Register model in model registry”.

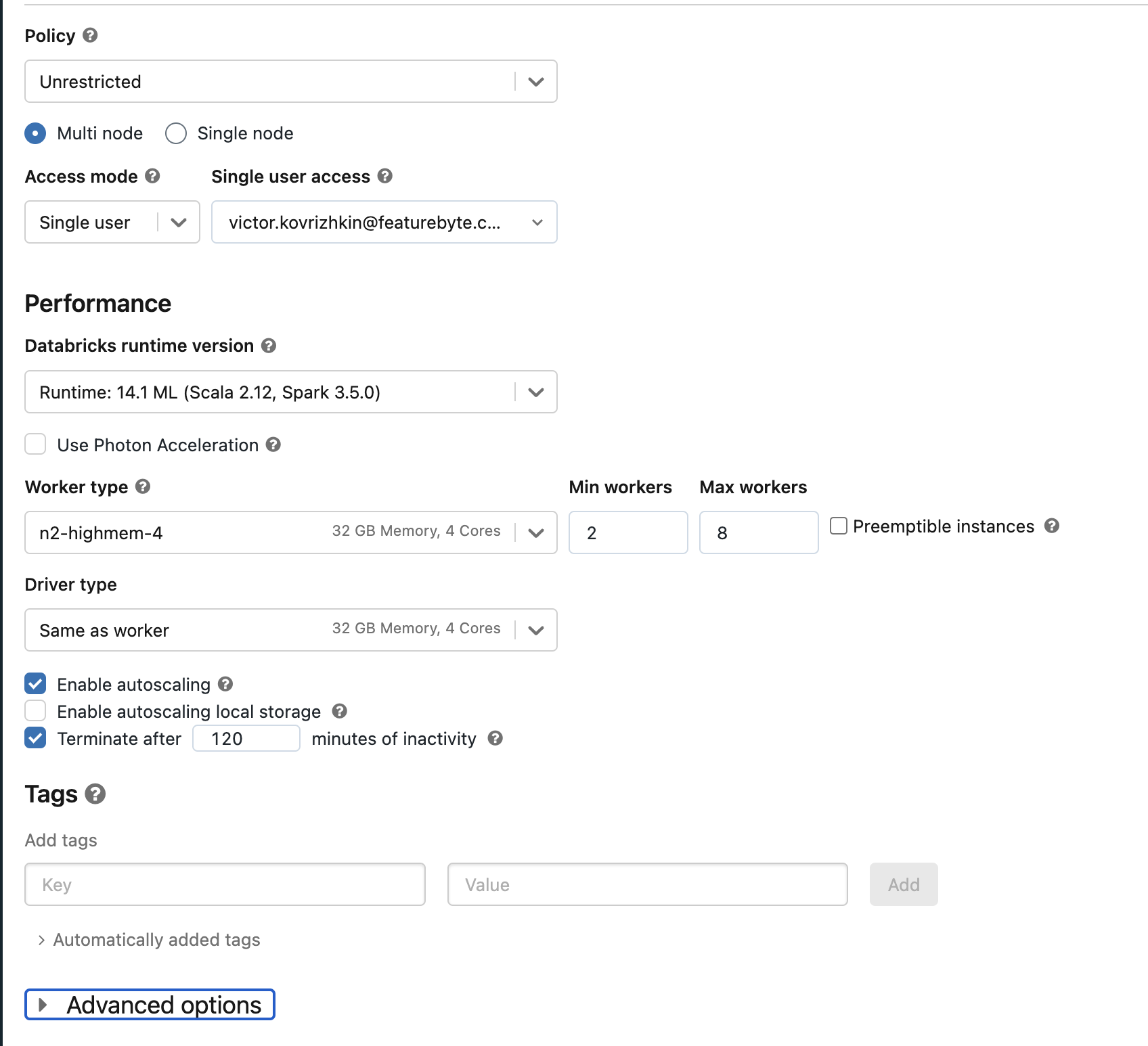

Go to compute and create ML cluster:

Make sure to pick an ML cluster in "Databrick runtime version", otherwise ML flow will not be available. Scroll down and click on the "Create Compute" button.

Create notebook¶

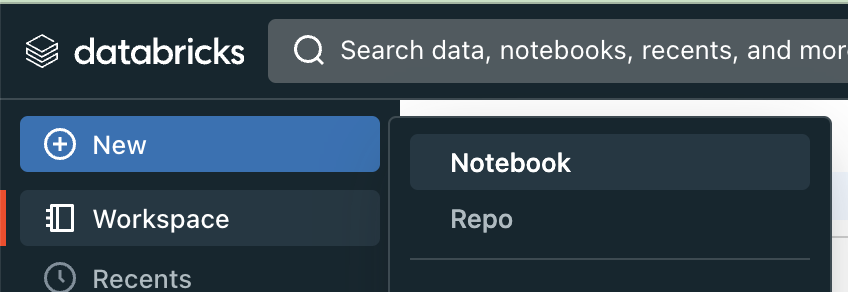

This notebook is mainly needed to register a model in the MLFlow registry and deploy it to production. If you are not using Databricks, just a Jupyter notebook or any other python script which has access to a custom MLFlow server is also fine. In Databricks you can create a notebook by clicking New -> Notebook

Register model in model registry¶

Since we are utilizing a Databricks notebook that was established in a prior step, there is no need to include MLFlow cluster connection code. If you're using a custom Jupyter notebook or Python script, it may be necessary to establish a connection with the MLFlow cluster. Typically, this connection is made using the mlflow.set_tracking_uri function, as detailed in the MLFlow documentation.

First, install required dependencies which are not pre-installed

Next, we will develop an MLFlow model wrapper. This wrapper will adapt the transformer model to meet the functional expectations of MLFlow. It will serve as the principal class for registration in the model registry and for subsequent deployment:

import pandas as pd

import mlflow

import sentence_transformers

from mlflow.models import infer_signature

from sentence_transformers import SentenceTransformer

class TransformerWrapper(mlflow.pyfunc.PythonModel):

def __init__(self):

self.model_name = "sentence-transformers/all-MiniLM-L12-v2"

def load_context(self, context : dict):

self.model = SentenceTransformer(self.model_name)

def predict(self, context : dict, model_input : pd.DataFrame):

return self.model.encode(model_input["text"])

Now, proceed to create the model, infer its input and output structure, specify the necessary pip dependencies, and register the model to the model registry:

with mlflow.start_run() as run:

model_name = "transformer-model"

registered_model_name=f"development.default.{model_name}"

model = TransformerWrapper()

model.load_context({})

pip_reqs = mlflow.pyfunc.get_default_conda_env()

pip_reqs["dependencies"][-1]["pip"] += [

"sentence-transformers=={}".format(sentence_transformers.__version__),

]

sample_inputs = pd.DataFrame({"text": ["Hello", "world"]})

log_result = mlflow.pyfunc.log_model(

model_name,

python_model=model,

conda_env=pip_reqs,

input_example=sample_inputs,

signature=infer_signature(sample_inputs, model.predict(None, sample_inputs)),

registered_model_name=registered_model_name,

)

This will produce an output similar to:

Registered model 'transformer-model' already exists. Creating a new version of this model...

Created version '3' of model 'development.default.transformer-model'.

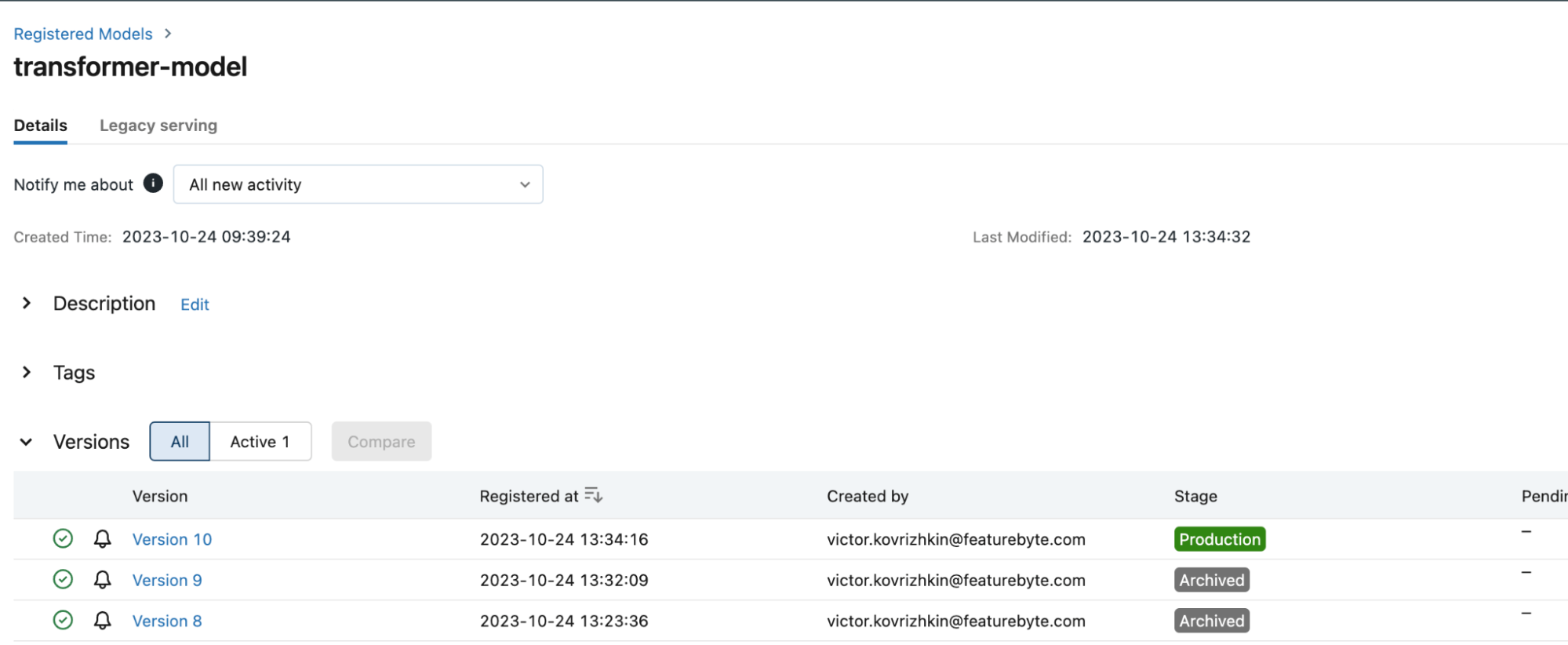

Navigate to the 'Models' section located in the left-hand menu. There, select our 'transformer-model' to view its details.

Deploy model to serving endpoint¶

Next, we will create a serving endpoint for the model:

from mlflow.deployments import get_deploy_client

client = get_deploy_client("databricks")

endpoint = client.create_endpoint(

config={

"name": model_name,

"config": {

"served_entities": [

{

"entity_name": registered_model_name,

"entity_version": log_result.registered_model_version,

"workload_size": "Small",

"scale_to_zero_enabled": True

}

],

}

}

)

Navigate to the 'Serving' section located in the left-hand menu. Select 'transformer-model' to view the details of the endpoint.

You will need to wait for some time for the container to be built and the endpoint to be ready. If the endpoint is not ready, you can check the logs to see what is happening.

Now, let’s query the endpoint using the requests library. Fill in the placeholders with your Databricks workspace URI and access token. You can generate access tokens by navigating to the 'Settings', then 'Developer', and finally the 'Access Tokens' section.

import requests

response = requests.post(

'<your_databricks_workspace_uri>/serving-endpoints/transformer-model/invocations',

headers={

'Authorization': 'Bearer <your_databricks_access_token>',

'Content-Type': 'application/json',

},

json={"inputs": {"text": ["hello", "world"]}},

)

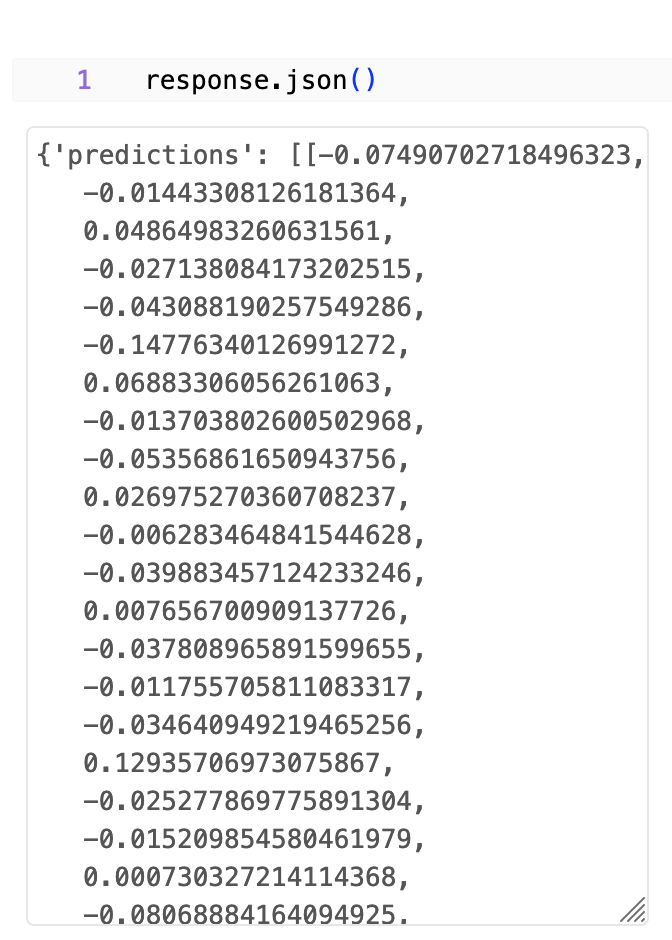

After executing this code we can check the status and response body:

We see that response status is 200 (success), and the body contains our embeddings.

Full notebook is awailable here.